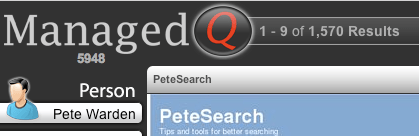

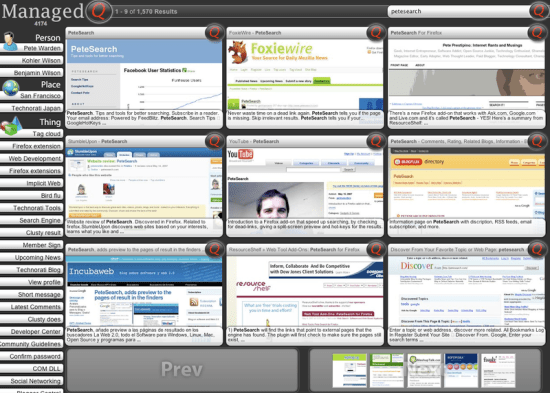

PeteSearch is a semantic web application: it takes web pages designed to be read by humans and turns them into data that software can process. It’s a pretty specialized application, focused purely on pages that list external sites associated with particular search terms, but the wide range of sites I’m able to support with the same code shows that my approach is robust.

The model I use for search pages is that they must contain three pieces of information:

- A list of search terms, embedded in the URL

- A list of external sites associated with those terms

- A link to the next page of results
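The three-part model can be sketched as a small data structure. This is a minimal Python illustration, not PeteSearch’s own code (which runs as JavaScript in the browser). The class `SearchResultsPage`, the `AnchorCollector` parser and the `collect_links` helper are hypothetical names. The collector uses the standard library’s `html.parser` to gather the raw (href, link text) pairs from which the external sites and the next-page link would later be picked.

```python
from dataclasses import dataclass, field
from html.parser import HTMLParser
from typing import List, Optional, Tuple


@dataclass
class SearchResultsPage:
    """Hypothetical container for the three pieces a results page must provide."""
    terms: List[str]                                        # search terms, from the URL
    result_sites: List[str] = field(default_factory=list)   # external sites linked as results
    next_page_url: Optional[str] = None                     # link to the next page, if any


class AnchorCollector(HTMLParser):
    """Records (href, visible link text) for every <a href=...> on a page."""

    def __init__(self) -> None:
        super().__init__()
        self.links: List[Tuple[str, str]] = []
        self._href: Optional[str] = None
        self._text: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            # attrs is a list of (name, value) pairs; anchors without href are ignored
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        # Only text inside an open anchor counts as link text
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append((self._href, "".join(self._text).strip()))
            self._href = None


def collect_links(html: str) -> List[Tuple[str, str]]:
    """Return the (href, text) pairs that result sites and the next link are picked from."""
    parser = AnchorCollector()
    parser.feed(html)
    parser.close()
    return parser.links
```

For example, `collect_links('<a href="http://example.com/">Example</a>')` returns `[("http://example.com/", "Example")]`. Deciding which of those links are results is the job of the per-engine definitions.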

Recognizing all of these is data-driven, using a definition for each engine that specifies:

- The host name and action used by the engine, so we can tell which pages are search results. For Google that’s google.com/search

- What precedes the search terms in the URL, e.g. for Google that’s q=

- Which external sites are linked to but aren’t part of the results, e.g. Google links to answers.com for definitions of words

- Which link text marks an external link that isn’t part of the results, e.g. Google links to cached copies of results on numbered servers, using Cached as the link’s text

- Which word is used for the link to the next page of results. For English that’s almost always Next, but I also support other languages

You can experiment with this by editing the SearchEngineList.js file inside PeteSearch. It contains an array of these definitions, along with an explanation of the exact format they’re stored in. It’s pretty straightforward to add a new engine that fits this pattern, and most engines do.
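The list-of-definitions idea can be sketched as a registry plus a lookup that picks the engine whose host and action match a URL. This is a hypothetical Python stand-in, not the real contents or format of SearchEngineList.js. The dictionary keys, `SEARCH_ENGINES`, `find_engine` and the "ExampleSearch" entry (including its German "Weiter" next word) are invented for illustration.

```python
from typing import Any, Dict, List, Optional
from urllib.parse import urlparse

# Hypothetical stand-in for the array in SearchEngineList.js; the keys here are
# illustrative and are not the file's actual format.
SEARCH_ENGINES: List[Dict[str, Any]] = [
    {"name": "Google", "host_and_action": "google.com/search",
     "terms_param": "q", "next_words": ["Next"]},
    # Adding an engine is one more entry; this one is invented for illustration.
    {"name": "ExampleSearch", "host_and_action": "search.example.com/find",
     "terms_param": "query", "next_words": ["Next", "Weiter"]},
]


def find_engine(url: str,
                engines: List[Dict[str, Any]] = SEARCH_ENGINES) -> Optional[Dict[str, Any]]:
    """Return the first definition whose host and action match url, else None."""
    parsed = urlparse(url)
    host = parsed.hostname or ""
    for engine in engines:
        domain, _, action = engine["host_and_action"].partition("/")
        # Accept the bare domain or any subdomain, e.g. www.google.com
        on_domain = host == domain or host.endswith("." + domain)
        if on_domain and parsed.path.rstrip("/") == "/" + action:
            return engine
    return None
```

Because recognition lives entirely in the data, supporting another engine that fits the pattern means appending a definition rather than changing `find_engine`.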

The only way the semantic web is going to progress beyond proofs of concept is if there’s some concrete, practical, commercial application for it. I’ve seen this with AI: its applications in robotics and games have pushed the field forward much more than pure research has. The semantic web is stuck in a chicken-and-egg situation: nobody builds applications because nobody builds sites that are data sources, because nobody builds applications, and so on.

I’m not the only one to notice this. Piggy Bank is a much more ambitious MIT project that works like Greasemonkey, in that it provides a framework for capturing data from many different sites using plugin scripts.

My goal is to demonstrate that a semantic web application can be useful today, in the real world, by creating a compelling tool based on my approach. I’m worried that unless somebody can show something useful, the semantic web is going to succumb to the AI curse and remain the technology of tomorrow indefinitely!