If a user shares your site's content as a photo on Facebook, it's incredibly powerful marketing. It means you've produced something compelling enough that users want to show it off to their friends, and it gives you a good chance to entice those friends to try your service. It's pretty hard to figure out how to implement it, though: there are a lot of moving parts and no examples that show how to put them all together. Since I just added this as a new feature on Jetpac and it's been a big hit, I thought I'd share how I did it.

Asking for extra permissions

One of the nicest surprises about jumping back into Facebook development after a long hiatus was how savvy users are about permissions. In the old days people tended to click through no matter what was there, but I found our acceptance rate dropped off a cliff when we had 'publish_stream' as default. I'm guessing that's because users have been burned by spammy apps, so I now ask for a lot fewer permissions on the first connect. That meant that the first step after a user clicked on the 'Share' button was to ask for that extra permission.

Actually though, there's a step before that, as suggested by my friend Jeff Widman. He's had a lot of experience optimizing Facebook conversions and he recommended a short dialog explaining why I was going to ask for permissions before sending the users to Facebook's site. That seemed obvious after he said it, so I whipped up a quick explanation:

The next challenge was how to send the user to a new login dialog that requested the publish_stream permission. We're using the OmniAuth gem in Ruby, and with a bit of Googling I found Mike Pack's explanation of how to add an extra setup stage in Ruby on Rails. It was a bit funky (it involves returning a 404 code to indicate success, for example), but it worked like a charm. Here's the Sinatra version I ended up with:
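As a rough illustration of that setup stage (a sketch under assumptions, not the original listing): OmniAuth's `:setup` option can point at a route or at a callable, and the handler below is written as a plain Rack-style lambda so it could be used either way. The scope strings, the `share=1` query flag and the constant names are all hypothetical.

```ruby
# Hypothetical scopes: a minimal one for the first connect, a wider one for sharing.
BASIC_SCOPE   = 'email'
SHARING_SCOPE = 'email,publish_stream'

# Rack-style handler for OmniAuth's setup phase. OmniAuth stores the active
# strategy in env['omniauth.strategy'], so its options can be changed per request.
FACEBOOK_SETUP = lambda do |env|
  # Assumed convention: the 'Share' button links to /auth/facebook?share=1
  wants_sharing = env['QUERY_STRING'].to_s.split('&').include?('share=1')
  env['omniauth.strategy'].options[:scope] = wants_sharing ? SHARING_SCOPE : BASIC_SCOPE

  # The funky part: a 404 is the conventional "setup finished" response.
  [404, { 'Content-Type' => 'text/plain' }, ['Setup complete.']]
end
```

In a Sinatra app the same body would most likely live in a `get '/auth/facebook/setup'` route, with the provider registered using `setup: true`; passing the lambda itself as the `:setup` option should work too.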

Rendering the screenshot

It's surprising just how hard it is to take a screenshot of a web page. For very good security reasons it's not something you can do in a general way on the client side. It's still possible to run a signed Java Applet if you can persuade your users to click through scary security dialogs, but there's no other way I know to access the browser's rendering of the DOM. You can write your own HTML renderer into something like Canvas, or use Flash's renderer, but those have very patchy results.

That meant I had to investigate server-side rendering. In the past I'd experimented with using Firefox as a headless browser, but I was intrigued by what I'd seen of tools like PhantomJS that use Qt's built-in support to render using WebKit. This turned out to be a good solution, with a few things to watch out for:

– It's not truly headless. You still need an X server to run it, but happily xvfb-run is easy to install and does the trick, at the cost of a bit of startup overhead and complexity.

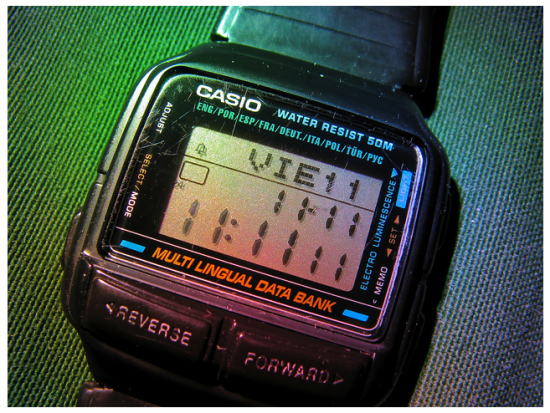

– It takes about 15 seconds to render in our case, which is long enough that we needed some kind of frontend logic to tell the user that we're still working on it.

– If things go wrong, there are no obvious error messages; the output image just isn't there. I didn't debug deeply enough to figure out if there's some stderr that I'm missing, or if it's lost in the bowels of X Windows somewhere, but when there's a problem it makes figuring it out tough.

– The best way I could find to integrate it was through a system call to an external process. In my case the URL has no user input, but if it did you'd need to validate everything that goes into the command string to avoid nasty security issues. It also meant lots of kludgy bouncing of data back-and-forth between the script and the file system.

– You'll have to think about how to authenticate the call, since the server-side page request won't automatically inherit the user's normal cookies. In my case I ended up packaging the authentication information so I could appear to be the user as far as my frontend server was concerned.

– You'll see scroll-bars baked into the result unless the image is the same size as the rendered page. In my case we have CSS that always causes this problem no matter what size you specify! I'll be putting in a fix to special-case the styling as a workaround tomorrow, but there doesn't seem to be a general way to solve this.
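One way to package authentication for the server-side page request, sketched under assumptions (this is not necessarily how Jetpac does it): sign the user's ID and an expiry time with a server-side secret, pass the resulting token in the URL the renderer loads, and have the frontend verify it before serving the page. `RENDER_SECRET`, the token layout and the function names are all hypothetical, and the sketch assumes numeric user IDs.

```ruby
require 'openssl'

# Hypothetical shared secret; in practice this would come from configuration.
RENDER_SECRET = ENV.fetch('RENDER_SECRET', 'dev-only-secret')

def sign_render_payload(payload)
  OpenSSL::HMAC.hexdigest(OpenSSL::Digest.new('SHA256'), RENDER_SECRET, payload)
end

# Builds "user_id:expiry:signature" for the renderer to send back to the frontend.
def render_token(user_id, expires_at)
  payload = "#{user_id}:#{expires_at.to_i}"
  "#{payload}:#{sign_render_payload(payload)}"
end

# Compares two strings without bailing out at the first differing byte.
def secure_equal?(a, b)
  return false unless a.bytesize == b.bytesize
  a.bytes.zip(b.bytes).reduce(0) { |acc, (x, y)| acc | (x ^ y) }.zero?
end

# Returns the user ID as a string, or nil if the token is forged or expired.
def user_id_from_render_token(token, now = Time.now)
  user_id, expires, signature = token.to_s.split(':', 3)
  return nil unless user_id && expires && signature
  return nil unless secure_equal?(sign_render_payload("#{user_id}:#{expires}"), signature)
  return nil if now.to_i > expires.to_i
  user_id
end
```

Keeping the expiry short (a minute or so would cover a 15-second render) limits the damage if a token ever leaks into a log.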

Here's the function I wrote to convert a URL into an image:
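What follows is a minimal sketch of a function like that rather than the original listing. It assumes `xvfb-run` and `phantomjs` are on the PATH and that PhantomJS's example `rasterize.js` script is available at the hypothetical path below; the function names are illustrative.

```ruby
require 'open3'
require 'tmpdir'
require 'uri'

# Assumed location of PhantomJS's example rasterize script.
RASTERIZE_SCRIPT = 'rasterize.js'

# Builds the argument list for the external process. Passing an array (not a
# single command string) means no shell ever parses the URL.
def screenshot_command(url, output_path)
  uri = begin
    URI.parse(url)
  rescue URI::InvalidURIError
    nil
  end
  unless uri.is_a?(URI::HTTP) && uri.host
    raise ArgumentError, "not an http(s) URL: #{url.inspect}"
  end
  ['xvfb-run', '-a', 'phantomjs', RASTERIZE_SCRIPT, uri.to_s, output_path]
end

# Renders the page and returns the PNG bytes, bouncing through a temp file.
def url_to_image(url)
  Dir.mktmpdir do |dir|
    output_path = File.join(dir, 'screenshot.png')
    output, status = Open3.capture2e(*screenshot_command(url, output_path))
    # A missing output file is often the only sign of failure, so check for it
    # explicitly and surface whatever stdout/stderr text was captured.
    unless status.success? && File.exist?(output_path)
      raise "screenshot failed for #{url}: #{output}"
    end
    File.binread(output_path)
  end
end
```

Even when the URL contains no user input, validating it and skipping the shell costs little and removes a whole class of injection problems.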

Uploading the screenshot to Facebook

We're almost there! The final hurdle was figuring out how to use Facebook's API to actually upload the image to the user's profile. I was excited when I ran across an official blog post describing how to do this, but unfortunately it only shows how to craft a form a user can use to upload a file from their own machine. I needed something that would emulate a multipart form post from Ruby, and happily I discovered Cody Brimhall's module that did exactly that. I had to modify it a little since it still expected to pull the data from a file on the server, instead of an in-memory object, but that was easy enough. Here's the modified module:
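To show the shape of the idea, here is a minimal sketch of a multipart encoder that takes its file data from an in-memory string. It is an illustration under assumptions, not Cody Brimhall's module or the modified version; `InMemoryMultipart`, `FilePart` and `encode` are hypothetical names.

```ruby
require 'securerandom'

module InMemoryMultipart
  CRLF = "\r\n"

  # A file field whose bytes are already in memory rather than on disk.
  FilePart = Struct.new(:filename, :content_type, :data)

  # Returns [body, content_type_header] for a hash of field name => value,
  # where each value is either a plain string or a FilePart.
  def self.encode(fields, boundary = SecureRandom.hex(16))
    body = String.new # empty binary string, safe for raw image bytes
    append = ->(text) { body << text.to_s.b }

    fields.each do |name, value|
      append.call("--#{boundary}#{CRLF}")
      if value.is_a?(FilePart)
        append.call(%(Content-Disposition: form-data; name="#{name}"; filename="#{value.filename}"#{CRLF}))
        append.call("Content-Type: #{value.content_type}#{CRLF}#{CRLF}")
        append.call(value.data)
      else
        append.call(%(Content-Disposition: form-data; name="#{name}"#{CRLF}#{CRLF}))
        append.call(value)
      end
      append.call(CRLF)
    end

    append.call("--#{boundary}--#{CRLF}")
    [body, "multipart/form-data; boundary=#{boundary}"]
  end
end
```

The returned body goes out as the POST payload, with the second element used as the request's Content-Type header.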

And here's a snippet of code that shows how to call the Graph API with the right form data:
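A hedged sketch of what such a call can look like. To keep it self-contained it swaps in the standard library's `Net::HTTP` multipart support (`set_form`) in place of the module above, and it assumes the unversioned `/me/photos` endpoint with `message` and `source` fields; the function names and the `screenshot.png` filename are hypothetical.

```ruby
require 'net/http'
require 'stringio'
require 'uri'

# Assumed endpoint for posting a photo to the current user's profile.
GRAPH_PHOTOS_URL = URI('https://graph.facebook.com/me/photos')

# Builds the multipart POST without sending it, so it can be inspected first.
def build_photo_upload_request(access_token, image_bytes, caption)
  request = Net::HTTP::Post.new(GRAPH_PHOTOS_URL.path)
  request.set_form(
    [
      ['access_token', access_token],
      ['message', caption],
      # StringIO lets the in-memory image stand in for a file on disk.
      ['source', StringIO.new(image_bytes), { filename: 'screenshot.png', content_type: 'image/png' }]
    ],
    'multipart/form-data'
  )
  request
end

# Sends the request over HTTPS and returns the Net::HTTPResponse.
def upload_photo(access_token, image_bytes, caption)
  request = build_photo_upload_request(access_token, image_bytes, caption)
  Net::HTTP.start(GRAPH_PHOTOS_URL.host, GRAPH_PHOTOS_URL.port, use_ssl: true) do |http|
    http.request(request)
  end
end
```

The access token has to belong to a user who granted the publish permission in the first step, otherwise the Graph API will reject the upload.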

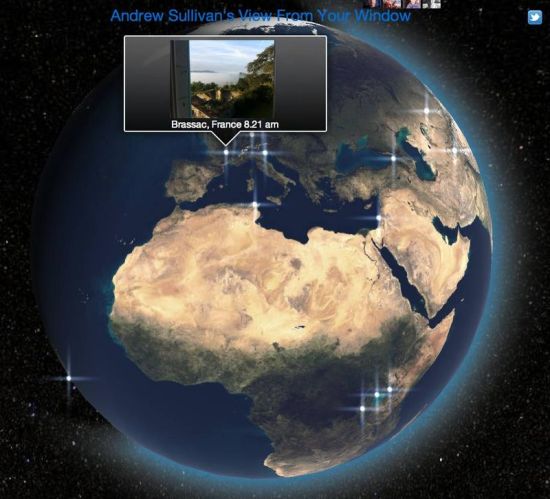

Before I call this, I give the user a preview of the image and the chance to write a custom caption for it:

I was briefly tempted to give people an option to tag friends who are mentioned in the profile, but that felt too spammy, and it turns out it's prohibited by Facebook anyway. In the future we might make it easy for users to 'Send' the photo, but it's a very delicate line to tread. We've seen decent uptake from it just appearing in the stream, so we're happy with that for now.

There you have it, the three steps towards screenshot sharing nirvana! If you've got something remarkable enough that users want to share it with their friends, now you can make it easy for them and drive your own growth at the same time.