Photo by Pierre J.

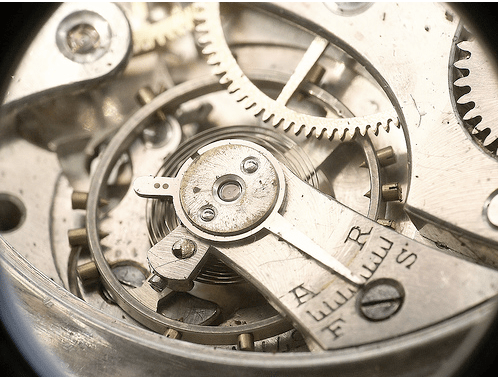

I knew I wanted to build a map of how people were connected in the Boulder tech scene. The first step was accessing the raw data, in this case all the Twitter messages from the first 60 local people I'd identified. I already had a system set up to rapidly analyze large numbers of email messages for my Mailana startup. It's modular, with different import components that access mail APIs like Exchange's MAPI/RPC, Gmail's IMAP and Outlook's Object Model, all outputting a stream of messages in standard XML form. Using Twitter's API it was pretty easy to build an importer. The only wrinkle was that I had to search for @someone in the message body, and add that to the recipients field in the XML.
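To make that wrinkle concrete, here is a minimal sketch of pulling @mentions out of a message body so they can be treated as recipients. It's in Python rather than whatever the real importer uses, and the function name, the lowercasing and the username pattern (letters, digits and underscores, up to 15 characters) are my assumptions for illustration, not the Mailana code.

```python
import re

# Assumed username pattern: letters, digits and underscores, up to 15 characters.
# The lookbehind skips things like email addresses (foo@example.com).
MENTION_RE = re.compile(r"(?<![A-Za-z0-9_])@([A-Za-z0-9_]{1,15})(?![A-Za-z0-9_])")


def extract_recipients(body):
    """Return the unique @mentioned usernames in a message body,
    lowercased, in order of first appearance."""
    recipients = []
    for name in MENTION_RE.findall(body):
        lowered = name.lower()
        if lowered not in recipients:
            recipients.append(lowered)
    return recipients


print(extract_recipients("@Bob thanks, cc @alice and @bob"))  # → ['bob', 'alice']
```

The resulting list is what would go into the recipients field of the XML for that message.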

That whirred away for a while, pulling the complete message histories into my database, with indices keyed on the recipients as well as lots of other values. Sitting on top of that database I've got a Facebook App-style REST API that lets me run queries like "Tell me who sent messages to whom within this group of people". Running that on the Twitter messages gave me a list that conceptually looked like this:

Alice to Bob: 10 messages sent, 3 messages received

Alice to Charles: 4 messages sent, 7 messages received

…

What I actually wanted was a single number for any relationship, a measure of how strongly Alice and Bob are connected. My choice was the lower of the sent and received counts, so in the above case:

Alice to Bob: Strength 3

Alice to Charles: Strength 4

…

I like this method for mail because it excludes bots like Facebook notification addresses that you never reply to, and penalises other sorts of unequal relationships, e.g. famous people you might have emailed who ignore you. Not that that ever happens to me of course.
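Here's a small sketch of that strength measure, assuming the messages have already been boiled down to (sender, recipient) pairs. The function name and the data shape are hypothetical; the real numbers came out of the Mailana API rather than code like this.

```python
from collections import Counter


def relationship_strengths(messages):
    """Given (sender, recipient) pairs, return {(a, b): strength} where
    strength is the lower of the a-to-b and b-to-a message counts.
    One-way relationships score zero and are left out."""
    sent = Counter(messages)
    strengths = {}
    for (a, b), count in sent.items():
        if a == b:
            continue  # ignore people messaging themselves
        key = tuple(sorted((a, b)))
        if key in strengths:
            continue  # already handled from the other direction
        strength = min(count, sent.get((b, a), 0))
        if strength > 0:
            strengths[key] = strength
    return strengths


messages = (
    [("alice", "bob")] * 10 + [("bob", "alice")] * 3
    + [("alice", "charles")] * 4 + [("charles", "alice")] * 7
    + [("notifier", "alice")] * 5  # a bot nobody replies to
)
print(relationship_strengths(messages))
# → {('alice', 'bob'): 3, ('alice', 'charles'): 4}
```

The notifier never gets a reply, so its minimum is zero and it drops out of the results entirely.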

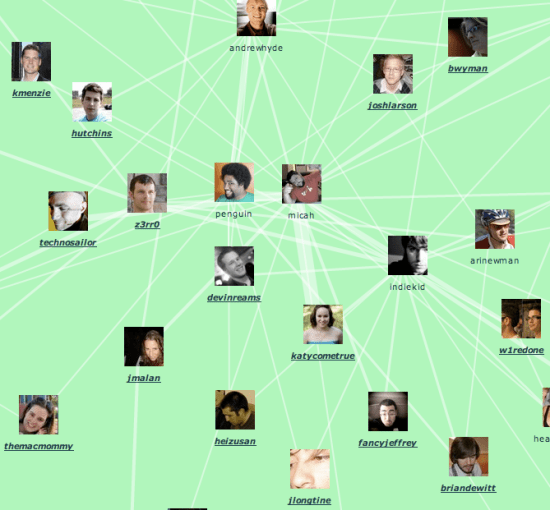

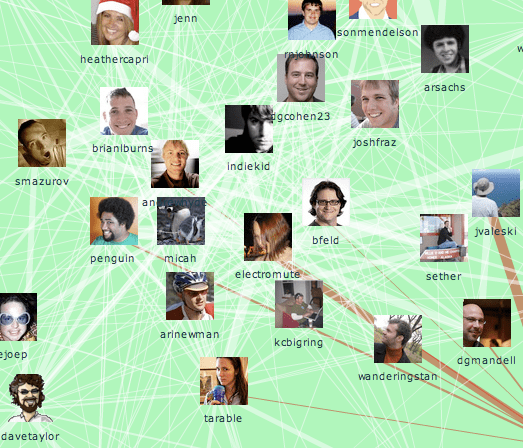

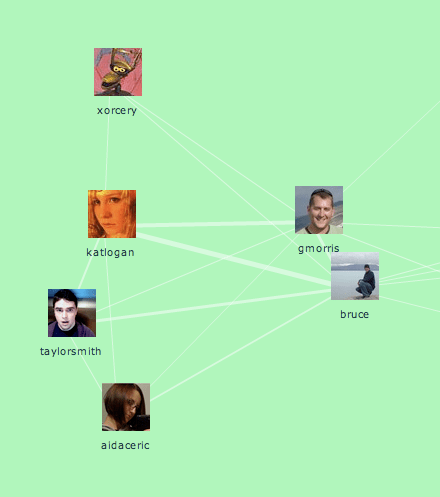

Now that I had a list of all the relationships in the community, I needed to display them. I wanted something that could be interacted with inside the browser, so I built a Flash component. I'd never written any ActionScript before, but Mark Shepherd's SpringGraph example was a great starting point. After a few days of wrestling with the wonders of Flex I had something working.

I then wrote a PHP script that accessed the Mailana API to produce the link information, and then output it in an XML form my component could read in. I based it on the format Daniel Mclaren used for his handy Constellation Roamer plugin, since I'd used that before.
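As a rough illustration of that step, here is a sketch that turns a dictionary of relationship strengths into node-and-edge XML. It's Python rather than the PHP I actually used, and the element and attribute names (`graph`, `node`, `edge`, `source`, `target`, `strength`) are placeholders I've made up for this example, not the real Constellation Roamer schema.

```python
import xml.etree.ElementTree as ET


def links_to_xml(strengths):
    """Serialise {(person_a, person_b): strength} as a simple graph document.
    Element and attribute names are hypothetical placeholders."""
    root = ET.Element("graph")
    # One node per person who appears in any relationship.
    people = sorted({name for pair in strengths for name in pair})
    for name in people:
        ET.SubElement(root, "node", id=name)
    # One edge per relationship, carrying its strength.
    for (a, b), strength in sorted(strengths.items()):
        ET.SubElement(root, "edge", source=a, target=b, strength=str(strength))
    return ET.tostring(root, encoding="unicode")


xml_text = links_to_xml({("alice", "bob"): 3, ("alice", "charles"): 4})
print(xml_text)
```

Writing `xml_text` out to a file gives you something a viewer component can load without touching the API at all.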

For the Boulder Twits site I didn't want to re-run the query every time to generate the XML. Though it only takes a fraction of a second to create, the system's still pre-alpha so I didn't want a production site depending on it. Instead I saved off several versions and pointed the component directly at the cached XML files. I also didn't want to require every viewer to re-run the force-directed layout, so I let each version arrange itself on my machine, saved the positions and paused the simulation by default. If you want to see the simulation running, try clicking the small play icon in the top left and dragging a few people around to see the graph compensate.
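The idea of settling the layout once and caching the result can be sketched like this. To be clear, this is a toy force-directed loop written for illustration, not SpringGraph's algorithm, and the constants, function name and JSON output are all my own assumptions.

```python
import json
import math
import random


def settle_layout(nodes, edges, iterations=200, seed=0):
    """Run a toy force-directed simulation and return {node: [x, y]}.
    All nodes repel each other; linked nodes are pulled together by springs."""
    nodes = list(nodes)
    rng = random.Random(seed)  # fixed seed so the saved layout is repeatable
    pos = {n: [rng.uniform(-1, 1), rng.uniform(-1, 1)] for n in nodes}
    repulsion, spring, rest_length, max_step = 0.05, 0.1, 0.5, 0.1
    for _ in range(iterations):
        force = {n: [0.0, 0.0] for n in nodes}
        for i, a in enumerate(nodes):
            for b in nodes[i + 1:]:
                dx, dy = pos[a][0] - pos[b][0], pos[a][1] - pos[b][1]
                dist = math.hypot(dx, dy) or 1e-6
                push = repulsion / dist ** 2
                force[a][0] += push * dx / dist
                force[a][1] += push * dy / dist
                force[b][0] -= push * dx / dist
                force[b][1] -= push * dy / dist
        for a, b in edges:
            dx, dy = pos[b][0] - pos[a][0], pos[b][1] - pos[a][1]
            dist = math.hypot(dx, dy) or 1e-6
            pull = spring * (dist - rest_length)
            force[a][0] += pull * dx / dist
            force[a][1] += pull * dy / dist
            force[b][0] -= pull * dx / dist
            force[b][1] -= pull * dy / dist
        for n in nodes:
            # Cap each move so one huge force can't fling a node away.
            pos[n][0] += max(-max_step, min(max_step, force[n][0]))
            pos[n][1] += max(-max_step, min(max_step, force[n][1]))
    return pos


positions = settle_layout(["alice", "bob", "charles"], [("alice", "bob")])
cached = json.dumps(positions)  # save this alongside the graph data
```

A viewer that loads the cached positions can start with the simulation paused, and only needs to run the physics when someone asks for it.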

I had a lot of fun putting this together. To be honest I was looking for a nice cozy code-womb to crawl into for a couple of weeks after draining my extrovert batteries through Defrag and lots of followup travel and meetings. This was just the ticket. Now I'm recharged and looking forward to meeting all the people I've discovered through compiling the list!