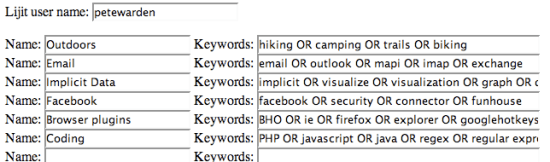

I really like Lijit’s blog search widget, but I don’t want a cloud generated from the most popular searches. I’ve seen other blogs end up with some very inappropriate word combinations, apparently from people gaming the system. I also find the standard notion of tags very limiting; it’s only when I step back and see what I’ve been posting about that natural categories emerge. When I’m writing a post I often have no idea if it’s the first in a long series, or a one-off. I’d much rather have an automatic way of tagging all my posts, based on a few categories I describe after the fact.

If you look at my right sidebar, you’ll see a new ‘Categories’ list. These are actually canned Lijit searches, so clicking on one brings up an in-context list of all the posts that match. For each category I’ve defined a Google search, often using the upper-case OR operator to pick up a variety of terms that appear in those types of posts. For example, the ‘Outdoors’ category searches for ‘hiking OR camping OR trails OR biking’.
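If you keep your terms in a list, joining them into a search string like this is a one-liner. Here is a minimal sketch; `buildCategoryQuery` is a name made up for illustration, and wrapping multi-word terms in double quotes assumes the search treats a quoted phrase as a unit, the way Google does.

```javascript
// Join a list of terms into one search string using the upper-case OR operator.
// Multi-word terms are wrapped in double quotes so they stay together as a phrase
// (an assumption about how the search engine handles quotes).
function buildCategoryQuery(terms) {
    return terms
        .map(function (term) { return term.trim(); })
        .filter(function (term) { return term !== ""; })   // ignore blank entries
        .map(function (term) {
            return term.indexOf(" ") === -1 ? term : '"' + term + '"';
        })
        .join(" OR ");
}

var outdoorsQuery = buildCategoryQuery(["hiking", "camping", "trails", "biking"]);
// outdoorsQuery is "hiking OR camping OR trails OR biking"
```

The resulting string is what would go into a category's Search field.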

I’ve mentioned this to the very nice people at Lijit as a feature request for a more general widget, but for now I’ve included a simple tool below to generate your own category lists. It generates the raw HTML, and you’ll need to work out how to get it into your own blog. It also calls back into Lijit’s scripts to bring up the in-context results, so you’ll need to have the main widget already installed.

Here’s what it takes to get this into Typepad:

- Generate the HTML for your list using the form below. Hit the button, then copy the HTML that appears in the textbox to the clipboard.

- Go to the TypeLists tab on your Typepad blog administration page.

- Click on the Create New List link.

- Set the type of the new list to Notes and the name to ‘Categories’.

- Click on Add Item, and paste the HTML from the generator into the label textbox.

- Go to the Publish tab, select the blog you want to add it to, and click Save Changes.

- Go to Weblogs, then Design, and choose Select Content.

- Disable the built-in categories module if you already have it selected, and click Save Changes.

- Go to Content Ordering and drag the new ‘Categories’ list to where you want it, and save.

Now if you refresh your site, you should see the new categories appear.

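```javascript
// Look up a page element by its id.
function get_object(id)
{
    return document.getElementById(id);
}

// Return the value of the form field with the given id, or "" if there is no such field.
function get_value(id)
{
    var currentobject = get_object(id);
    if (currentobject != null)
        return currentobject.value;
    else
        return "";
}

// Build the category list from the form fields, then show it both as
// raw HTML (for copying) and as a rendered preview.
function generate_widget()
{
    // Opening markup for the list; the contents of this string are missing here.
    var widgethtml = "";

    var username = get_value("username");

    var count;
    for (count = 0; count < 12; count += 1)
    {
        var nameid = "name" + count;
        var keywordsid = "keywords" + count;

        var namevalue = get_value(nameid);
        var keywordsvalue = get_value(keywordsid);

        // Skip rows where either field has been left blank.
        if ((namevalue != "") && (keywordsvalue != ""))
        {
            // Replace spaces with "+" so the search can be used in a link.
            var keywordsescaped = keywordsvalue.replace(/ /g, "+");

            // Opening link markup, built from username and keywordsescaped;
            // the contents of this string are missing here.
            var currenttag = "";
            currenttag += namevalue;
            // Closing link markup; also missing here.
            currenttag += "";

            widgethtml += currenttag;
        }
    }

    // Closing markup for the list; also missing here.
    widgethtml += "";

    var textpreview = get_object("textpreview");
    var htmlpreview = get_object("htmlpreview");

    textpreview.value = widgethtml;
    htmlpreview.innerHTML = widgethtml;
}
```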
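One thing to watch if you adapt the script: it drops the category name straight into the HTML and only swaps spaces for "+" in the search terms, so a name containing `&` or `<`, or a search containing quotes, could produce broken markup. Below is a hedged sketch of two helpers that would guard against that. `escapeHtml` and `escapeKeywords` are hypothetical names of my own, not part of the tool or of Lijit's scripts, and I'm assuming the search ends up in a URL query string.

```javascript
// Escape characters that have special meaning in HTML text and attribute values.
function escapeHtml(text) {
    return String(text)
        .replace(/&/g, "&amp;")   // must run first so later entities aren't re-escaped
        .replace(/</g, "&lt;")
        .replace(/>/g, "&gt;")
        .replace(/"/g, "&quot;")
        .replace(/'/g, "&#39;");
}

// Encode a search string for a URL query, keeping "+" for spaces
// the way the generator above does.
function escapeKeywords(keywords) {
    return encodeURIComponent(keywords).replace(/%20/g, "+");
}

var safeName = escapeHtml("Food & Drink");
// safeName is "Food &amp; Drink"
var safeSearch = escapeKeywords("hiking OR camping");
// safeSearch is "hiking+OR+camping"
```

In `generate_widget`, these would be applied to `namevalue` and `keywordsvalue` respectively before they are added to the markup.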

The form asks for your Lijit user name, followed by twelve rows that each take a category Name and its Search. The output appears in two boxes, Generated HTML and Preview.

You can also open this in a separate page in case Typepad’s cleanup breaks the tool, and here’s a screenshot from my category creation: