I've just launched PageRankGraph.com – so what on earth is it?

A few days ago I sat down with the team at Blekko, and as we were geeking out together I realized they'd done something quite revolutionary with their search engine. They'd made the information they use to calculate their rankings public. This may sound arcane, but for the last decade SEO experts have desperately tried to figure out what affects their position in search results, using almost no hard data. Blekko reveals a lot of the raw information that goes into these PageRank-like calculations, especially which sites link to any domain, how often, and what the rank of each of those sites is. While the source data and algorithm they're using won't be identical to those of Google and the other search engines, they're close enough to at least draw some rough conclusions.

They provide their own view of this information if you pass in the /seo tag when you search, but I wanted something that told me more about where my Google Juice was coming from. For example, they list Twitter in top place for my site, but I doubt it's helping my ranking very much, since Twitter's high rank is split across the enormous number of links it hands out. Blekko don't offer an API yet, but they did seem relaxed about well-behaved scrapers, so I was able to pull down the HTML of their results pages and extract the information I needed. The basic ranking formula is something like:

myRank = ((siteARank*#linksFromAToMe)/#linksFromAToEveryone)+((siteBRank*…

I want to know who's making the biggest difference to my site, and Blekko gives me the siteARank and #linksFromAToMe variables I need. The only one that's missing is #linksFromAToEveryone, but they do show the total number of pages on each domain which works as a rough approximation of the number of links there. Pulling in that information for the top 25 sites they list then gives me enough data to calculate which of those are making the greatest contribution.
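As a rough sketch of that calculation in Python: each linking site's contribution is its rank times the number of links to me, divided by its page count (standing in for #linksFromAToEveryone). The class and function names here are hypothetical, not taken from the actual PageRankGraph code, and the numbers are made up purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class LinkingSite:
    domain: str
    rank: float        # siteARank, as reported by the search engine
    links_to_me: int   # #linksFromAToMe
    page_count: int    # rough stand-in for #linksFromAToEveryone


def contribution(site: LinkingSite) -> float:
    """Estimate how much rank one linking site passes on to my site."""
    if site.page_count <= 0:
        # No usable denominator, so treat the contribution as zero.
        return 0.0
    return site.rank * site.links_to_me / site.page_count


def rank_contributors(sites):
    """Return (domain, contribution) pairs, largest contribution first."""
    scored = [(s.domain, contribution(s)) for s in sites]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Made-up example values, not real Blekko data.
sites = [
    LinkingSite("bigsocial.example", rank=1000.0, links_to_me=50, page_count=10_000_000),
    LinkingSite("smallblog.example", rank=40.0, links_to_me=10, page_count=200),
]

for domain, score in rank_contributors(sites):
    print(f"{domain}: {score:.4f}")
```

With these invented numbers the small blog comes out well ahead of the high-ranked but heavily diluted site, which is the same effect I suspected with Twitter.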

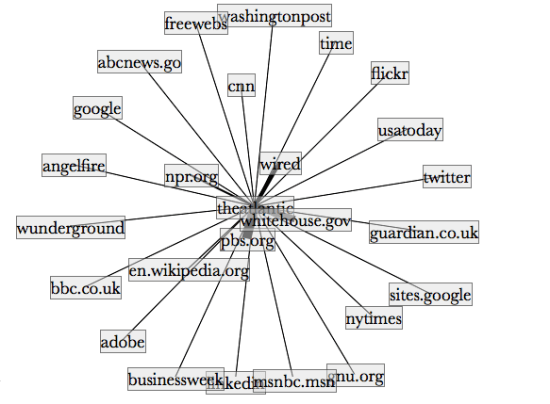

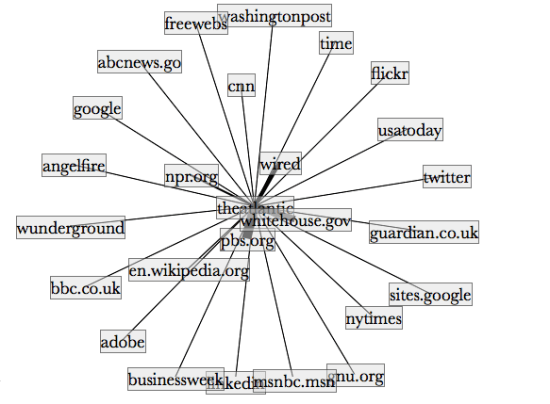

Just go to PageRankGraph.com, enter the URL of the site you're interested in, wait a few seconds and you'll see a network graph showing the web of links that are contributing to that site's prominence in search results.

So, why is this interesting? Take a look at the graph for the Atlantic website above. The White House website looks like it's making a significant contribution to the magazine's ranking in the search results. I'm actually a long-time subscriber, but there's something a little odd about a news outlet's prominence on the internet being affected by the government. FoxNews gets much less of a boost, though intriguingly the EPA shows up as a contributor. Should all government sites use rel="nofollow" for external URLs? Don't grab your pitchforks and march to Washington just yet. This data is only suggestive, and Google may be doing things differently to Blekko in this case, but it's the sort of question that we couldn't get any good data on before now.

Just think of the recent New York Times article on DecorMyEyes.com – if they'd been able to research where the links were actually coming from, they could have built a much more detailed picture of what was actually happening to drive the site up the rankings.

The code itself is fully open-source on GitHub, including a jQuery HTML5/Canvas network graph rendering plugin that I had fun hacking together. Let me know if you come up with any interesting uses for it, and I'd love ideas on how to improve the quality of the information.

[Update – it looks like there are some major differences between the way Blekko handles nofollow links and the way Google and the other search engines do. This will mean larger differences in the results than I'd hoped for, so take the numbers with an even bigger pinch of salt.]