Over the last few months, I've been doing a lot more work with name analysis, and I've made some of the tools I use available as open-source software. Name analysis takes a list of names, and outputs guesses for the gender, age, and ethnicity of each person. This makes it incredibly useful for answering questions about the demographics of people in public data sets. Fundamentally though, the outputs are still guesses, and end-users need to understand how reliable the results are, so I want to talk about the strengths and weaknesses of this approach.

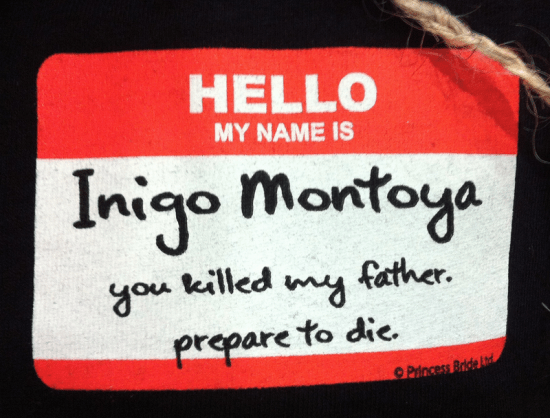

The short answer is that it can never work any better than a human looking at somebody else's name and guessing their age, gender, and race. If you saw Mildred Hermann on a list of names, I bet you'd picture an older white woman, whereas Juan Hernandez brings to mind a Hispanic man, with no obvious age. Clearly this is not always reliable for individuals (I bet there are some young Mildreds out there), but as the sample size grows, the errors tend to cancel each other out.
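To make the "errors cancel out" point concrete, here is a minimal sketch rather than anything taken from my tools. It assumes every per-person guess is a calibrated probability and that the errors are independent, which is optimistic: a systematic bias will not shrink with sample size. The function name `share_and_spread` and the 0.9 confidence value are made up for illustration.

```python
import math

def share_and_spread(probabilities):
    """Return (expected share, standard deviation of that share).

    `probabilities` holds one guess per person, e.g. P(woman | first name).
    Assumes the guesses are calibrated and their errors are independent.
    """
    n = len(probabilities)
    expected = sum(probabilities) / n
    # Variance of a sum of independent yes/no outcomes, scaled to a share.
    variance = sum(p * (1 - p) for p in probabilities) / (n * n)
    return expected, math.sqrt(variance)

# Hypothetical per-name confidence of 0.9, for lists of different sizes.
for size in (10, 1000):
    share, spread = share_and_spread([0.9] * size)
    print(size, round(share, 3), round(spread, 4))
```

The expected share is the same for both lists, but the spread around it falls roughly with the square root of the list length, which is why the aggregate is more trustworthy than any single guess.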

The algorithms themselves work by looking at data that's been released by the US Census and the Social Security Administration. These data sets list the popularity of 90,000 first names by gender and year of birth, and 150,000 family names by ethnicity. I then use these frequencies as the basis for all of the estimates. Crucially, all the guesses depend on how strong a correlation there is between a particular name and a person's characteristics, which varies for each property. I'll give some estimates of how strong these relationships are in the sections that follow, and at the end I link to some papers with more rigorous quantitative evaluations.
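As a rough illustration of turning name frequencies into estimates, the sketch below collapses per-year birth counts into a probability of each gender given a first name. The rows are invented toy numbers, not real Census or Social Security figures, and `gender_shares` is a hypothetical helper, not the API of any released tool.

```python
from collections import defaultdict

# Invented toy counts, NOT real Census or Social Security figures.
# Each row: (first name, gender, birth year, number of births).
TOY_FIRST_NAME_ROWS = [
    ("mildred", "F", 1920, 900),
    ("mildred", "F", 1990, 10),
    ("mildred", "M", 1920, 5),
    ("francis", "M", 1920, 600),
    ("francis", "F", 1920, 400),
]

def gender_shares(rows):
    """Collapse per-year counts into P(gender | first name)."""
    totals = defaultdict(lambda: defaultdict(int))
    for name, gender, _year, count in rows:
        totals[name][gender] += count
    shares = {}
    for name, by_gender in totals.items():
        all_births = sum(by_gender.values())
        shares[name] = {g: c / all_births for g, c in by_gender.items()}
    return shares

shares = gender_shares(TOY_FIRST_NAME_ROWS)
print(shares["mildred"])
print(shares["francis"])
```

A name like Mildred ends up with nearly all of its weight on one gender, while a crossover name like Francis stays split, which is exactly the "strength of correlation" that each guess depends on.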

If you are going to use this approach in your own work, the first thing to watch out for is that any correlations are only relevant for people in the US. Names may be associated with very different traits in other countries, and our racial categories especially are social constructs and so don't map internationally.

Gender is the most reliable signal that we can glean from names. There are some cross-over first names with a mixture of genders, like Francis, and some that are too rare to have data on, but overall the estimate of how many men and women are present in a list of names has proved highly accurate. It helps that there are some regular patterns to augment the sampled data, like names ending with an 'a' being associated with women.
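Here is a minimal sketch of how a table lookup and a spelling pattern might be combined to count women in a list. The probabilities in `TOY_P_FEMALE` and the 0.8 fallback weight for names ending in 'a' are invented for illustration, and the function names are hypothetical.

```python
# Invented P(female | first name) values, for illustration only.
TOY_P_FEMALE = {"mildred": 0.99, "juan": 0.01, "francis": 0.4}

def p_female(first_name, table=TOY_P_FEMALE):
    """Return P(female) for a name, or None when there is no evidence."""
    key = first_name.strip().lower()
    if key in table:
        return table[key]
    if key.endswith("a"):
        # Hypothetical fallback weight for the "ends with 'a'" pattern.
        return 0.8
    return None

def estimate_women(first_names):
    """Return (expected number of women, number of names with no evidence)."""
    guesses = [p_female(name) for name in first_names]
    known = [g for g in guesses if g is not None]
    return sum(known), len(guesses) - len(known)

expected, unknown = estimate_women(["Mildred", "Juan", "Francis", "Xiomara", "Zyx"])
print(expected, unknown)
```

Summing probabilities rather than forcing a hard label on every name lets crossover names contribute fractionally, and reporting the unknown count shows how much of the list the estimate actually covers.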

Asian and Hispanic family names tend to be largely specific to those communities, so an occurrence is a strong signal that the person is a member of that ethnicity. There are some confounding factors though, especially with Spanish-derived names in the Philippines. There are certain names, especially those from Germany and Nordic countries, that strongly indicate that the owner is of European descent, but many surnames are multi-racial. There are some associations between African-Americans and certain names like Jackson or Smalls, but these are also shared by a lot of people from other ethnic groups. These ambiguities make non-Hispanic and non-Asian measures more indicators than strong metrics, and they won't tell you much until you get into the high hundreds for your sample size.
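One way to reflect the difference between strong and ambiguous surnames is sketched below: only assign a label to an individual when one group clearly dominates, and otherwise let the name contribute fractionally to aggregate counts. The shares, category labels, and the 0.9 threshold are all invented for illustration and are not real Census figures.

```python
# Invented shares, NOT real Census figures. Category labels are hypothetical.
TOY_SURNAME_SHARES = {
    "hernandez": {"hispanic": 0.93, "white": 0.05, "other": 0.02},
    "jackson": {"black": 0.53, "white": 0.42, "other": 0.05},
}

def confident_label(surname, threshold=0.9, table=TOY_SURNAME_SHARES):
    """Return a label only when one group's share reaches the threshold."""
    shares = table.get(surname.strip().lower())
    if shares is None:
        return None
    label, share = max(shares.items(), key=lambda item: item[1])
    return label if share >= threshold else None

def expected_counts(surnames, table=TOY_SURNAME_SHARES):
    """Sum fractional shares across a list; unknown surnames add nothing."""
    totals = {}
    for surname in surnames:
        for label, share in table.get(surname.strip().lower(), {}).items():
            totals[label] = totals.get(label, 0.0) + share
    return totals

print(confident_label("Hernandez"))
print(confident_label("Jackson"))
print(expected_counts(["Hernandez", "Jackson"]))
```

With numbers like these, a single ambiguous surname gives no individual label at all, and its fractional contribution only becomes meaningful once many names are added together.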

Age has the weakest correlation with names. There are actually some strong patterns by time of birth, with certain names widely recognized as old-fashioned or trendy, but those tend to be swamped by class- and ethnicity-based differences in the popularity of names. I do calculate the most popular year for every name I know about, and compensate for life expectancy using actuarial tables, but it's hard to use that to derive a likely age for a population of people unless they're equally distributed geographically and socially. There tends to be a trickle-down effect where names first become popular amongst higher-income parents, and then spread throughout society over time. That means if you have a group of higher-class people, their first names will have become most widely popular decades after they were born, and so they'll tend to appear a lot younger than they actually are. Similar problems exist with different ethnic groups, so overall treat the calculated age with a lot of caution, even with large sample sizes.
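The life-expectancy adjustment can be sketched as weighting each birth year's count by the chance that someone born then is still alive. This is one plausible reading of the idea, not a description of my actual code, and both tables below are invented toy numbers rather than real Social Security or actuarial data.

```python
# Invented toy numbers for a single first name, NOT real data.
TOY_BIRTHS_BY_YEAR = {1920: 900, 1950: 300, 1990: 10}
# Hypothetical probability that someone born in that year is still alive.
TOY_SURVIVAL = {1920: 0.05, 1950: 0.70, 1990: 0.98}

def living_age_distribution(births_by_year, survival, current_year):
    """Return {age: share of living people with this name}."""
    living = {
        current_year - year: births * survival.get(year, 0.0)
        for year, births in births_by_year.items()
    }
    total = sum(living.values())
    if total == 0:
        return {}
    return {age: count / total for age, count in living.items()}

def expected_age(distribution):
    """Mean age under the distribution of living name-bearers."""
    return sum(age * share for age, share in distribution.items())

dist = living_age_distribution(TOY_BIRTHS_BY_YEAR, TOY_SURVIVAL, current_year=2020)
print(max(dist, key=dist.get), round(expected_age(dist), 1))
```

In this toy example the name peaked in 1920, yet the most likely age of a living bearer is 70 rather than 100, because few people from the peak cohort survive. The sketch says nothing about the class and ethnicity effects described above, which is where most of the error comes from.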

You should treat the results of name analysis cautiously – as provisional evidence, not as definitive proof. It's powerful because it helps in cases where no other information is available, but because those cases are often highly charged and controversial, I'd urge everyone to see it as the start of the process of investigation, not the end.

I've relied heavily on the existing academic work for my analysis, so I highly recommend checking out some of these papers if you do want to work with this technique. As an engineer, I'm also working without the benefit of peer review, so suggestions on improvements or corrections would be very welcome at pete@petewarden.com.

Use of Geocoding and Surname Analysis to Estimate Race and Ethnicity – A very readable survey of the use of surname analysis for ethnicity estimation in health statistics.

Estimating Age, Gender, and Identity using First Name Priors – A neat combination of image-processing techniques and first name data to improve the estimates of people's ages and genders in snapshots.

Are Emily and Greg More Employable than Lakisha and Jamal? – Worrying proof that humans rely on innate name analysis to discriminate against minorities.

First names and crime: Does unpopularity spell trouble? – An analysis that shows uncommon names are associated with lower-class parents, and so correlate with juvenile delinquency and other ills connected to low socioeconomic status.

Surnames and a theory of social mobility – A recent classic of a paper that uses uncommon surnames to track the effects of social mobility across many generations, in many different societies and time periods.

OnoMap – A project by University College London to correlate surnames worldwide with ethnicities. Commercially licensed, but it looks like you may be able to get good terms for academic usage.

Text2People – My open-source implementation of name analysis.