Pearls by Matuko Amini

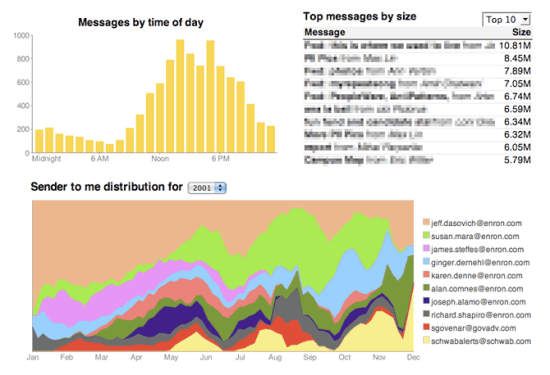

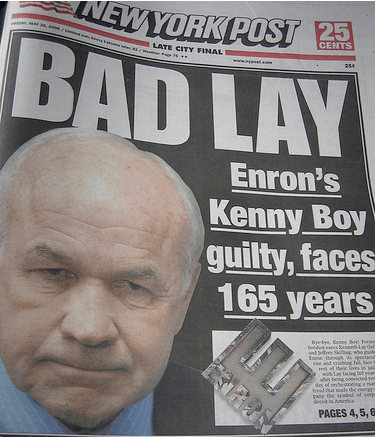

I need to load my Exchange server with a large set of real emails, so I can simulate how my tools will work on a big organization’s mail. The best data set out there is the Enron collection. Most researchers are doing static analysis, so it’s only available in easy-to-process forms such as a MySQL database or a directory of individual files. There’s no obvious way to turn them back into something that can be imported into Outlook or Exchange.

I needed a way to get it into a form that standard mail programs would recognize. The easiest format to convert individual files to is mbox. In this format, a set of email messages is stored in a single file ending with .mbox. Within each file, messages are separated by a "From line". This consists of the five characters "From " (the last one is a space), followed by an email address and a date in asctime format, such as "Tue Mar 18 12:11:51 2008". Every From line except the first must be preceded by a blank line. To make sure there’s no confusion with message content, any line beginning "From " in the body of a message must be changed to ">From ".
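The two rules above, the From line separator and the ">From " escaping, fit into a couple of small functions. This is a minimal sketch and not part of mailconvert.pl. The names `from_line`, `escape_from_lines` and `mbox_entry` are hypothetical, and it assumes the message date is already available as a Unix timestamp. Perl’s built-in `gmtime` in scalar context happens to produce the asctime layout directly.

```perl
use strict;
use warnings;

# Hypothetical helper: build an mbox "From " separator line from an address
# and a Unix timestamp. Scalar gmtime returns an asctime-style string such as
# "Thu Jan  1 00:00:00 1970" (the day is space-padded to two characters).
sub from_line {
    my ($address, $epoch) = @_;
    return "From $address " . scalar(gmtime($epoch));
}

# Hypothetical helper: prefix any line starting with "From " with ">" so a
# mail reader won't mistake it for the start of a new message.
sub escape_from_lines {
    my ($body) = @_;
    $body =~ s/^From />From /mg;
    return $body;
}

# Hypothetical helper: one complete mbox entry. It consists of the separator,
# the escaped message (forced to end in a newline) and a trailing blank line.
sub mbox_entry {
    my ($address, $epoch, $message) = @_;
    $message .= "\n" unless $message =~ /\n\z/;
    return from_line($address, $epoch) . "\n" . escape_from_lines($message) . "\n";
}

print mbox_entry('someone@example.com', 0, "Subject: hi\n\nFrom the desk of...\n");
```

Note that "From: " header lines are left alone, because the escaping only matches "From" followed by a space.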

Since this all involves heavy text processing, I turned to Perl. My script, mailconvert.pl, is included inline at the bottom of this post. It takes a directory hierarchy of individual email files, and for each folder creates a mailbox.mbox that contains all of the messages in that folder. It recognises emails by the presence of a "From: " header, and uses that address and the Date: header to build a complete From line separator. Run it with the current working directory set to the root of the hierarchy. For example, cd into the maildir directory if you’re converting the files extracted from the Enron tar archive.
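Before importing, it can be worth checking that each generated mailbox.mbox holds as many messages as its folder had mail files. Here’s a minimal sketch that assumes the layout described above, where a message starts at any line beginning with "From " that is either the first line of the file or follows a blank line. The `count_mbox_messages` name is hypothetical, and this checker isn’t part of mailconvert.pl.

```perl
use strict;
use warnings;

# Hypothetical checker: count the messages in an mbox file by counting
# "From " separator lines at the top of the file or right after a blank line.
sub count_mbox_messages {
    my ($path) = @_;
    open(my $in, '<', $path) or die "couldn't open $path: $!\n";
    my $count = 0;
    my $previous_blank = 1;    # treat the start of the file like a blank line
    while (my $line = <$in>) {
        $count++ if $previous_blank and $line =~ /^From /;
        $previous_blank = ($line =~ /^\r?\n\z/) ? 1 : 0;
    }
    close($in);
    return $count;
}

# Usage: perl countmbox.pl path/to/mailbox.mbox [more.mbox ...]
if (@ARGV) {
    printf "%s: %d messages\n", $_, count_mbox_messages($_) for @ARGV;
}
```

Escaped ">From " lines in message bodies are not counted, which is exactly why the escaping rule matters.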

I’ve tested with Apple Mail, and I’m able to import the files this generates. It’s a bit eerie seeing all the Enron mails show up in my inbox, and it’s a good reminder that these are messages that the senders never intended to be public. If you do use these mails yourself, please be respectful of their privacy.

Once you’re in mbox, there are a lot of tools available to convert the files to Microsoft-friendly formats like PST. I’ll be covering those in a future article, along with some enhancements like grabbing the attachments and keeping the folder structure from the originals.

```perl
#!/usr/bin/perl

use strict;
use warnings;

use Cwd;
use File::Find;
use Date::Parse;

# You need a date in the from line, though it seems redundant with the headers.
# Without a date there, Apple Mail at least won't parse the mbox files, so pick
# an arbitrary value to put in there if we don't find a header. It has to be
# in asctime format, the same as the dates built from the Date: headers.
my $datedefault = "Tue Mar 18 12:11:51 2008";

# The name of the mbox file created from all the messages in each directory
my $outputfilename = "mailbox.mbox";

# File::Find changes into each directory as it walks the tree, so the relative
# $outputfilename always names the current directory's mbox. The preprocess
# hook runs once per directory: it deletes any mbox left by a previous run and
# removes it from the list of files to convert.
find(
    {
        wanted     => \&findcallback,
        preprocess => sub {
            unlink $outputfilename;
            return grep { $_ ne $outputfilename } @_;
        },
    },
    cwd
);

# This is called back for every file found, and appends the contents to the
# main mbox file for that directory, together with a from line of the format
# "From <email address> <asctime format date>" and a blank line.
sub findcallback
{
    # $_ is the name relative to the current directory; $File::Find::name is
    # the full path, which is only used in messages
    my $file = $_;

    # If it's not a file then don't do anything
    return unless -f $file;

    my $in;
    unless (open($in, '<', $file))
    {
        warn "couldn't open $File::Find::name: $!\n";
        return;
    }

    my $text = "";
    my $from = "";
    my $date = "";
    my $inheaders = 1;

    while (my $line = <$in>)
    {
        # The first blank line marks the end of the headers
        $inheaders = 0 if $line =~ /^\r?\n\z/;

        # If this line is a From: header, and we haven't found one before, then
        # grab the address to use in the "From " separator between mail messages
        if ($inheaders and $from eq "" and $line =~ /^From: /)
        {
            $from = $line;
            $from =~ s/^From: /From /;
            # remove the new line
            $from =~ s/[\r\n]//g;
        }
        elsif ($line =~ /^From /)
        {
            # If there's a line that looks like a "From " separator, add a > to
            # prevent it messing up the mbox parsing
            $line = ">" . $line;
        }

        # If this is a Date: header, then grab the value to use after the address
        if ($inheaders and $date eq "" and $line =~ /^Date: (.*)$/)
        {
            my $inputdate = $1;
            $inputdate =~ s/\s+$//;
            my $datevalue = str2time($inputdate);
            # In scalar context gmtime returns an asctime-format string;
            # an unparseable date falls through to the default below
            $date = scalar gmtime($datevalue) if defined $datevalue;
        }

        $text .= $line;
    }
    close($in);

    # If no date header was found, pick an arbitrary one with the correct format
    $date = $datedefault if $date eq "";

    # Only write anything out if this looks like a valid mail file
    return unless length($text) > 0 and length($from) > 0;

    # End the message with a newline plus a blank line, so the next From line
    # is preceded by a blank line
    $text .= "\n" unless $text =~ /\n\z/;

    my $out;
    unless (open($out, '>>', $outputfilename))
    {
        warn "couldn't append to $File::Find::dir/$outputfilename: $!\n";
        return;
    }
    print $out $from, " ", $date, "\n", $text, "\n";
    close($out);
}
```