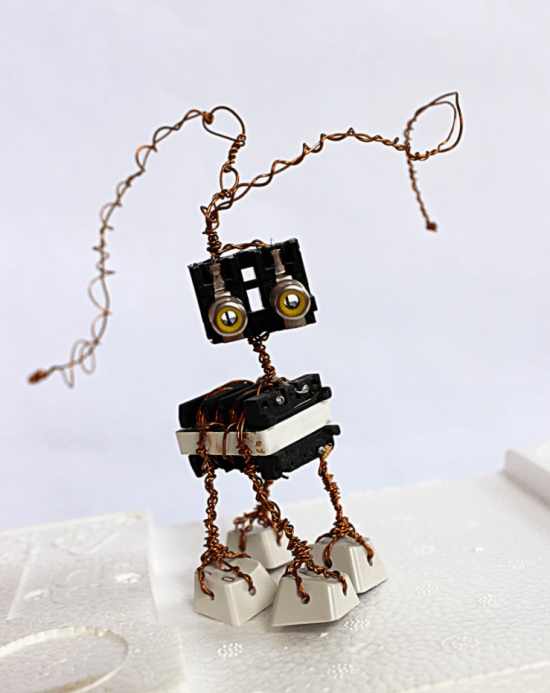

I miss having a dog, and I’d love to have a robot substitute! My friend Lukas built a $100 Raspberry Pi robot using TensorFlow to wander the house and recognize objects, and with the person detection model it can even follow me around. I want to be able to talk to my robot though, and at least have it understand simple words. To do that, I need to write a simple speech recognition example for TensorFlow.

As I looked into it, one of the biggest barriers was the lack of suitable open data sets. I need something with thousands of labelled utterances of a small set of words, from a lot of different speakers. TIDIGITS is a pretty good start, but it’s a bit small, a bit too clean, and more importantly you have to pay to download it, so it’s not great for an open source tutorial. I like https://github.com/Jakobovski/free-spoken-digit-dataset, but it’s still small and only includes digits. LibriSpeech is large enough, but isn’t broken down into individual words, just sentences.

To solve this, I need your help! I’ve put together a website at https://open-speech-commands.appspot.com/ (now at https://aiyprojects.withgoogle.com/open_speech_recording) that asks you to speak about 100 words into the microphone, records the results, and then lets you submit the clips. I’m then hoping to release an open source data set built from these contributions, along with a TensorFlow example of a simple spoken word recognizer. The website itself is a little Flask app running on GCE, and the source code is up on GitHub. Unfortunately it doesn’t work on iOS, but it should work on Android devices and on any desktop machine with a microphone.

I’m hoping to get as wide a variety of accents and devices as I can, since that will help the recognizer work for as many people as possible. So please take five minutes to record your contributions if you get a chance, and share this with anyone else who might be able to help!

Hi there,

Nice project. I’d be glad to supply a couple of data sets. Pls. notify me when your site is ready.

BR,

~A

Hey,

Have you tested the person-following on an RPi?

Pingback: A quick hack to align single-word audio recordings « Pete Warden's blog

I will contribute with my Romanian accent. Great initiative!

Hi Pete,

I landed here from your “quick hack audio alignment” blog post and then realized you want to implement some of the same things I do, using Pis and AI. I don’t actually care whether it runs on a Pi, but I want the same end result: being able to go beyond “Ok Google” results and have a more intelligent conversation with a custom AI assistant. Not really an assistant, but a “companion”. I think you get where I am going.

I don’t have an exact solution for you, but thought I would share my experience. I am also stuck on the “human voice audio detection” problem. I have experimented with the Google Voice To Text API and NAudio to wrap my mind around what needs to happen. I am using C# on Windows, but am looking at how to do the same thing in a Python environment. I am not interested in piping between processes; I want something lower level and in the same process if possible (memory sharing). I am hooking up to NAudio’s peak audio detector event so I get notified when something comes over the microphone. I then naively start a recording for a few ms, take this audio snippet, and send it off to the Google voice API.

The C# app actually sort of works, and of course there is a delay going out to Google and coming back, but it got me thinking more about pre-processing the human voice audio up front before even trying to send it to a TensorFlow model. I’ve even thought about building a voice filter/amp with Arduino and Pure Data. FFTs are way over my head, but I also thought about feeding them into TF. I am still getting up to speed with TF, though, and that’s just another huge layer to get into my head.

Hi Pete!

Thanks, it works on Google Cloud (appspot). One problem I ran into: how do I obtain the “CLOUD_STORAGE_BUCKET” and “SESSION_SECRET_KEY”? I get an error when uploading sound data, even though I already changed them to my own bucket and key. I am not sure what is wrong. I am a beginner with Python and gcloud.