I’m pleased to say that we’ve been able to release a first version of TensorFlow’s quantized eight bit support. I was pushing hard to get it in before the Embedded Vision Summit, because it’s especially important for low-power and mobile devices, so it’s exciting to get it out there. All this documentation will be appearing on the main TensorFlow site also, but since I’ve talked so much about why eight-bit is important here, I wanted to give an overview of what we’ve released in this post too.

When modern neural networks were being developed, the biggest challenge was getting them to work at all! That meant that accuracy and speed during training were the top priorities. Using floating point arithmetic was the easiest way to preserve accuracy, and GPUs were well-equipped to accelerate those calculations, so it’s natural that not much attention was paid to other numerical formats.

These days, we actually have a lot of models being being deployed in commercial applications. The computation demands of training grow with the number of researchers, but the cycles needed for inference expand in proportion to users. That means pure inference efficiency has become a burning issue for a lot of teams.

That is where quantization comes in. It’s an umbrella term that covers a lot of different techniques to store numbers and perform calculations on them in more compact formats than 32-bit floating point. I am going to focus on eight-bit fixed point, for reasons I’ll go into more detail on later.

Why does Quantization Work?

Training neural networks is done by applying many tiny nudges to the weights, and these small increments typically need floating point precision to work (though there are research efforts to use quantized representations here too).

Taking a pre-trained model and running inference is very different. One of the magical qualities of deep networks is that they tend to cope very well with high levels of noise in their inputs. If you think about recognizing an object in a photo you’ve just taken, the network has to ignore all the CCD noise, lighting changes, and other non-essential differences between it and the training examples it’s seen before, and focus on the important similarities instead. This ability means that they seem to treat low-precision calculations as just another source of noise, and still produce accurate results even with numerical formats that hold less information.

Why Quantize?

Neural network models can take up a lot of space on disk, with the original AlexNet being over 200 MB in float format for example. Almost all of that size is taken up with the weights for the neural connections, since there are often many millions of these in a single model. Because they’re all slightly different floating point numbers, simple compression formats like zip don’t compress them well. They are arranged in large layers though, and within each layer the weights tend to be normally distributed within a certain range, for example -3.0 to 6.0.

The simplest motivation for quantization is to shrink file sizes by storing the min and max for each layer, and then compressing each float value to an eight-bit integer representing the closest real number in a linear set of 256 within the range. For example with the -3.0 to 6.0 range, a 0 byte would represent -3.0, a 255 would stand for 6.0, and 128 would represent about 1.5. I’ll go into the exact calculations later, since there’s some subtleties, but this means you can get the benefit of a file on disk that’s shrunk by 75%, and then convert back to float after loading so that your existing floating-point code can work without any changes.

Another reason to quantize is to reduce the computational resources you need to do the inference calculations, by running them entirely with eight-bit inputs and outputs. This is a lot more difficult since it requires changes everywhere you do calculations, but offers a lot of potential rewards. Fetching eight-bit values only requires 25% of the memory bandwidth of floats, so you’ll make much better use of caches and avoid bottlenecking on RAM access. You can also typically use SIMD operations that do many more operations per clock cycle. In some case you’ll have a DSP chip available that can accelerate eight-bit calculations too, which can offer a lot of advantages.

Moving calculations over to eight bit will help you run your models faster, and use less power (which is especially important on mobile devices). It also opens the door to a lot of embedded systems that can’t run floating point code efficiently, so it can enable a lot of applications in the IoT world.

Why Not Train in Lower Precision Directly?

There have been some experiments training at lower bit depths, but the results seem to indicate that you need higher than eight bit to handle the back propagation and gradients. That makes implementing the training more complicated, and so starting with inference made sense. We also already have a lot of float models already that we use and know well, so being able to convert them directly is very convenient.

How Can You Quantize Your Models?

TensorFlow has production-grade support for eight-bit calculations built it. It also has a process for converting many models trained in floating-point over to equivalent graphs using quantized calculations for inference. For example, here’s how you can translate the latest GoogLeNet model into a version that uses eight-bit computations:

curl http://download.tensorflow.org/models/image/imagenet/inception-2015-12-05.tgz -o /tmp/inceptionv3.tgz tar xzf /tmp/inceptionv3.tgz -C /tmp/ bazel build tensorflow/contrib/quantization/tools:quantize_graph bazel-bin/tensorflow/contrib/quantization/tools/quantize_graph \ --input=/tmp/classify_image_graph_def.pb \ --output_node_names="softmax" --output=/tmp/quantized_graph.pb \ --mode=eightbit

This will produce a new model that runs the same operations as the original, but with eight bit calculations internally, and all weights quantized as well. If you look at the file size, you’ll see it’s about a quarter of the original (23MB versus 91MB). You can still run this model using exactly the same inputs and outputs though, and you should get equivalent results. Here’s an example:

bazel build tensorflow/examples/label_image:label_image bazel-bin/tensorflow/examples/label_image/label_image \ --input_graph=/tmp/quantized_graph.pb \ --input_width=299 \ --input_height=299 \ --mean_value=128 \ --std_value=128 \ --input_layer_name="Mul:0" \ --output_layer_name="softmax:0"

You’ll see that this runs the newly-quantized graph, and outputs a very similar answer to the original.

You can run the same process on your own models saved out as GraphDefs, with the input and output names adapted to those your network requires. I recommend that you run them through the freeze_graph script first, to convert checkpoints into constants stored in the file.

How Does the Quantization Process Work?

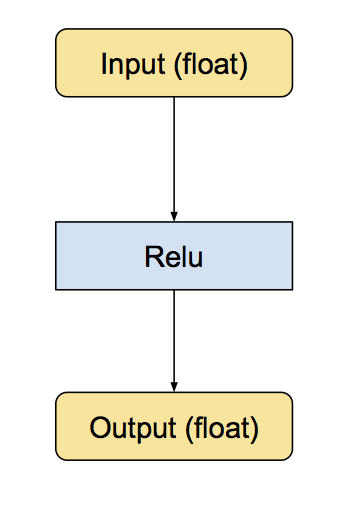

We’ve implemented quantization by writing equivalent eight-bit versions of operations that are commonly used during inference. These include convolution, matrix multiplication, activation functions, pooling operations and concatenation. The conversion script first replaces all the individual ops it knows about with quantized equivalents. These are small sub-graphs that have conversion functions before and after to move the data between float and eight-bit. Below is an example of what they look like. First here’s the original Relu operation, with float inputs and outputs:

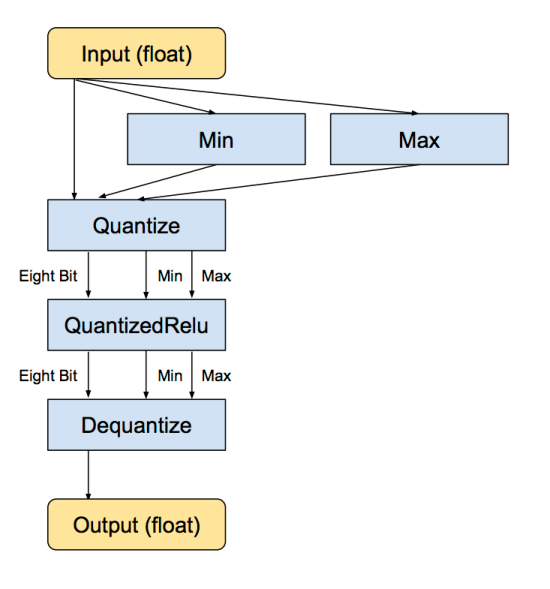

Then, this is the equivalent converted subgraph, still with float inputs and outputs, but with internal conversions so the calculations are done in eight bit.

The min and max operations actually look at the values in the input float tensor, and then feeds them into the Dequantize operation that converts the tensor into eight-bits. There’s more details on how the quantized representation works later on.

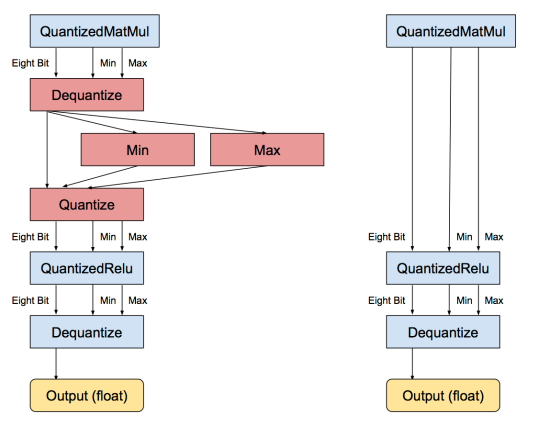

Once the individual operations have been converted, the next stage is to remove unnecessary conversions to and from float. If there are consecutive sequences of operations that all have float equivalents, then there will be a lot of adjacent Dequantize/Quantize ops. This stage spots that pattern, recognizes that they cancel each other out, and removes them, like this:

Applied on a large scale to models where all of the operations have quantized equivalents, this gives a graph where all of the tensor calculations are done in eight bit, without having to convert to float.

What Representation is Used for Quantized Tensors?

We approach converting floating-point arrays of numbers into eight-bit representations as a compression problem. We know that the weights and activation tensors in trained neural network models tend to have values that are distributed across comparatively small ranges (for example you might have -15 to +15 for weights, -500 to 1000 for activations on an image model, though the exact numbers will vary). We also know from experiment that neural nets tend to be very robust in the face of noise, and so the noise-like error produced by quantizing down to a small set of values will not hurt the precision of the overall results very much. We also want to pick a representation that’s easy to perform calculations on, especially the large matrix multiplications that form the bulk of the work that’s needed to run a model.

These led us to pick a representation that has two floats to store the overall minimum and maximum values that are represented by the lowest and highest quantized value. Each entry in the quantized array represents a float value in that range, distributed linearly between the minimum and maximum. For example, if we have minimum = -10.0, and maximum = 30.0f, and an eight-bit array, here’s what the quantized values represent:

Quantized | Float ----------+----- 0 | -10.0 255 | 30.0 128 | 10.0

The advantages of this format are that it can represent arbitrary magnitudes of ranges, they don’t have to be symmetrical, it can represent signed and unsigned values, and the linear spread makes doing multiplications straightforward. There are alternatives like Song Han’s code books that can use lower bit depths by non-linearly distributing the float values across the representation, but these tend to be more expensive to calculate on.

The advantage of having a strong and clear definition of the quantized format is that it’s always possible to convert back and forth from float for operations that aren’t quantization-ready, or to inspect the tensors for debugging purposes. One implementation detail in TensorFlow that we’re hoping to improve in the future is that the minimum and maximum float values need to be passed as separate tensors to the one holding the quantized values, so graphs can get a bit dense!

How do we Determine Ranges?

The nice thing about the minimum and maximum ranges is that they can often be pre-calculated. Weight parameters are constants known at load time, so their ranges can also be stored as constants. We often know the ranges for inputs (for examples images are usually RGB values in the range 0.0 to 255.0), and many activation functions have known ranges too. This can avoid having to analyze the outputs of an operation to determine the range, which we need to do for math ops like convolution or matrix multiplication which produce 32-bit accumulated results from 8-bit inputs.

If you’re doing any kind of arithmetic on 8-bit inputs, you’ll naturally start to accumulate results that have more than 8 bits of precision. If you add two 8 bit values, the result needs 9 bits. If you multiply two 8 bit numbers, you get 16 bits in the output. If you total up a series of 8-bit multiplications, like we do for matrix multiplication, the results grow beyond 16 bits, with the accumulator typically needing at least 20 to 25 bits, depending on how long the dot products involved are.

This can be an issue for our quantization approach, since we need to take an output that’s much wider than 8 bits and shrink it down to feed into the next operation. One way to do it for matrix multiplies would be to calculate the largest and smallest possible output values, assuming all of the input values were at extremes. This is safe, since we know mathematically that no results can fall outside this range, but in practice most weights and activation values are much more evenly distributed. This means that the actual range of values we see is much smaller than the theoretical one, so if we used the larger bounds we’d be wasting a lot of our 8 bits on numbers that never appeared. Instead, we use the QuantizeDownAndShrinkRange operator to take a 32-bit accumulated tensor, analyze it to understand the actual ranges used, and rescale so that the 8-bit output tensor uses that range effectively. There are strategies that involve observing the actual minimums and maximums encountered with large sets of training data, and hard-coding those to avoid analyzing the buffer for ranges every time, but we don’t currently include that optimization.

How is the Rounding Done?

One of the hardest and most subtle problems we hit during quantization was the accumulation of biases. As I mentioned above, neural networks are very resilient to noise, but unless you’re very careful with rounding it’s easy to introduce biases in a single direction that build up during computation and wreck the final accuracy. You can see the final formula in the code, but the important part was that we needed to subtract the rounded version of the minimum from the rounded version of the float input value, rather than subtracting float minimum from the input and then rounding.

What’s Next?

We’ve found that we can get extremely good performance on mobile and embedded devices by using eight-bit arithmetic rather than floating-point. You can see the framework we use to optimize matrix multiplications at gemmlowp. We still need to apply all the lessons we’ve learned to the TensorFlow ops to get maximum performance on mobile, but we’re actively working on that. Right now, this quantized implementation is a reasonably fast and accurate reference implementation that we’re hoping will enable wider support for our eight-bit models on a wider variety of devices.

If you’re interested, I highly recommend digging through the quantization code in TensorFlow, especially looking at the kernels that implement quantized ops. These all include reference implementations that we’re hoping will help portability to new hardware devices.

We also hope that this demonstration will encourage the community to explore what’s possible with low-precision neural networks. Thanks to everyone who helped put the quantization support together, it’s been great getting this out there!

Congratulations to you and the whole TensorFlow team! The continued efforts to make TensorFlow as portable and deployable as possible are astounding. Looking forward to the next blog-worthy update!

SqueezeNet offers a more extreme reduction in model size:

https://github.com/DeepScale/SqueezeNet

SqueezeNet with compression:

https://github.com/songhan/SqueezeNet_compressed

Update: we now have a better website on SqueezeNet with compression:

http://songhan.github.io/SqueezeNet-Deep-Compression/

@FIANDOLA ..I checked mentioned github repo; contains only decoder file .How can i compress my own model ?? if not feasible then suitable approach

This is great work Pete!

Readers maybe also interested in these articles:

Pruning can remove lots of weights before doing quantization without hurting accuracy. Pruning can remove 67% for CONV layers, 90% for FC layers, verified across LeNet, AlexNet, VGGNet (shown in below paper), GoogLeNet, SqueezeNet, NeuralTalk (done recently after the paper) : http://arxiv.org/pdf/1506.02626v3.pdf

Combining with ‘Deep Compression’ even more compression ratio could be achieved by using mixed precision:

http://arxiv.org/pdf/1510.00149.pdf (new results release in ICLR’16: GoogLeNet could be compressed by 10x; SqueezeNet could be compressed by 10x.)

Using EIE: efficient inference engine more speedup and energy efficiency could be achieved:

http://arxiv.org/abs/1602.01528

A byproduct of network pruning and ‘Deep Compression’ is that DSD (dense-sparse-dense) training can help improve the prediction accuracy without changing the model architecture:

http://songhan.github.io/SqueezeNet-DSD-Training

Why do you quantize the values in this way instead of fixed point quantization? Then you don’t need to find and Min and Max value every time.

Why do you quantize the parameter in this way instead of fixed point quantization? Then, you don’t need to find the Mina and Max value.

This is great work. I tried and could able to reduce the size to 23 MB. But am getting an error Not found: Op type not registered ‘Dequantize’, when I’m trying to classify image. Please help.

Sorry you’re hitting problems, I should have included more information on loading the ops.

Which script are you using to classify the image? If it’s the python one, then you’ll need to include the python quantized ops in the BUILD file, like I do here for quantize_graph:

https://github.com/tensorflow/tensorflow/blob/master/tensorflow/contrib/quantization/tools/BUILD#L29

Then you’ll need to load the ops explicitly in the script, like this:

https://github.com/tensorflow/tensorflow/blob/master/tensorflow/contrib/quantization/tools/quantize_graph.py#L289

If you’re using the C++ label_image example, it’s easier because you should just be able to add tensorflow/contrib/quantization:cc_ops and tensorflow/contrib/quantization/kernels:quantized_ops as dependencies to your cc_binary in the BUILD file.

Hello,

Based on your instruction, I built the tensorflow/models/image/imagenet/classify_image.py and use it to classify a watermelon image with a 8bit googlenet pb model. The results before and after quantization are problematic:

Before:

watermelon (score = 0.57405)

melon (score = 0.19122)

onion (score = 0.02786)

winter squash (score = 0.02478)

muskmelon, sweet melon (score = 0.01922)

After:

W tensorflow/contrib/quantization/kernels/quantized_conv_ops.cc:201] Zero is not representable in the quantized range used by the input. This means QuantizedConv2d has to fall back to a slow implementation, since the border of zero values can’t be represented easily. You should try to construct graphs that avoid this situation.

souchong, soochong (score = 0.51366)

tea, tea leaf (score = 0.08708)

milk (score = 0.06333)

barley, barleycorn (score = 0.02030)

sesame seed, benniseed (score = 0.01940)

What does that warning mean?

I encounter the same error in IOS examples, how should I do?

I’m having the same issue as F Ferroni on TF release 1.3.0.

../examples/label_image/BUILD:12:1: no such target ‘//tensorflow/contrib/quantization:cc_ops’: target ‘cc_ops’ not declared in package ‘tensorflow/contrib/quantization’ defined by /home/ncl/Documents/mario/tensorflow/tensorflow/contrib/quantization/BUILD and referenced by ‘//tensorflow/examples/label_image:label_image’

..//examples/label_image/BUILD:12:1: no such package ‘tensorflow/contrib/quantization/kernels’: BUILD file not found on package path and referenced by ‘//tensorflow/examples/label_image:label_image’

The problem seems to be that code form ‘tensorflow/contrib/quantization’ has been included in ‘tensorflow/core/kernels/’. I tried modifying the BUILD file of ‘label_image’ to include “//tensorflow/core/kernels:quantized_ops”, and the build operation worked. However I can’t find a substitute for “//tensorflow/contrib/quantization:cc_ops”, not sure what the performance results will be if I simply don’t include this dependency.

Any suggestions?

Follow PETE’s instruction, it works

modify BUILD file in tensorflow/examples/label_image

cc_binary(

name = “label_image”,

srcs = [

“main.cc”,

],

linkopts = [“-lm”],

deps = [

“//tensorflow/cc:cc_ops”,

“//tensorflow/core:framework_internal”,

“//tensorflow/core:tensorflow”,

“//tensorflow/contrib/quantization:cc_ops”,

“//tensorflow/contrib/quantization/kernels:quantized_ops”,

],

)

Hi,

In the current release, if you add those extra dependencies “//tensorflow/contrib/quantization:cc_ops”,

“//tensorflow/contrib/quantization/kernels:quantized_ops”

it will not work.

xxx@gpu-box:~/tensorflow$ bazel build tensorflow/examples/label_image:label_image

ERROR: /home/xxx/tensorflow/tensorflow/examples/label_image/BUILD:10:1: no such target ‘//tensorflow/contrib/quantization:cc_ops’: target ‘cc_ops’ not declared in package ‘tensorflow/contrib/quantization’ defined by /home/xxx/tensorflow/tensorflow/contrib/quantization/BUILD and referenced by ‘//tensorflow/examples/label_image:label_image’.

ERROR: /home/xxx/tensorflow/tensorflow/examples/label_image/BUILD:10:1: no such package ‘tensorflow/contrib/quantization/kernels’: BUILD file not found on package path and referenced by ‘//tensorflow/examples/label_image:label_image’.

ERROR: Analysis of target ‘//tensorflow/examples/label_image:label_image’ failed; build aborted.

INFO: Elapsed time: 0.280s

My regards to you. That worked like a charm. N thanks for such a quick reply. Could able to classify using C++ label_image example on a quantized and smaller model. This is Grreat.

This is some great work Pete!

I am wondering if you also looked into using less than eight-bit representations? Have you benchmarked the performance of some existing networks using this framework yet? Are the results publicly available?

We did some similar work a while back in the Caffe framework, investigating the influence of quantization on output accuracy on a per-layer basis for a number of benchmarks: http://arxiv.org/pdf/1603.06777v1.pdf. It turns out some CNN-layers, even in AlexNet, allow 4-bit representation or less, while losing only about 1% in recognition accuracy for the full test data-set.

Great work! Have you benchemarked the quantized network on GPU?

Pingback: 1 – How to Quantize Neural Networks with TensorFlow

Thanks for that, it worked very good with label_image.

I have a problem when trying to use it with the android example.

When I added the ops to the build file it gives me errors.

Is there a different way to add dependencies when compiling for android?

Thanks, Dan

did your android example work now? I have the same problems..

Pingback: Bit width tweaks point way to practical deep learning - Tech Design Forum Techniques

Which version of Tensorflow has this feature enabled?

Congratulations to this great work! The quantization method saves disk space, but will it also reduce memory cost? In other words, if we load the quantized model into memory, will it cost the same memory as the original model?

Pingback: The Internet of Thinking Things – Intelligence at the Edge | OUseful.Info, the blog...

Thanks a lot for the tool, but it looks like the tutorial requires a small extension.

It looks like dequantize op is not registered by default. When I am trying to run quantized graph I have an error:

tensorflow.python.framework.errors.NotFoundError: Op type not registered ‘Dequantize’

Could you provide a tutorial on how to incorporate the corresponding kernels and ops ?

Also the quantization script contains quite a few mode options, discussion on what every mode is doing and how it can affect everything would really be of great use !

PS: btw, I have problems with ‘Quantize’ op even when I load the corresponding *.so files when I am trying to run graph after ‘quantize’ mode (‘weights’, which, as I understand, only converts const weights saved does not cause problems, and no longer complains about ‘Dequantize’ op). I load ops like:

tf.load_op_library(‘/opt/tensorflow/bazel-bin/tensorflow/contrib/quantization/_quantized_ops.so’)

tf.load_op_library(‘/opt/tensorflow/bazel-bin/tensorflow/contrib/quantization/kernels/_quantized_kernels.so’)

I am not using your load script (which supposed to do the same thing, as I understand, but resolve paths automatically) because I have some linking issues I haven’t figured out yet (maybe such troubleshooting is another point to add to the tutorial)

Error when I am running prediction:

File “/usr/local/lib/python2.7/dist-packages/tensorflow/python/framework/importer.py”, line 247, in import_graph_def

op_def = op_dict[node.op]

KeyError: u’Quantize’

Could anyone help a little bit with proper loading of ops ?

Do you have any analysis on the accuracy impact of 8-bit quantization for deep residual networks (http://arxiv.org/abs/1512.03385)? In my experiments quantizing ResNet-152 with this script, the results have been catastrophic. I didn’t spend much time into root causing the problem but I have seen similar results with the Ristretto dynamic fixed point approach (http://lepsucd.com/?page_id=621)

Hi Nico,

Did you try ternary weights fine-tuning before quantizing to 8-bit?

I believe once you have ternary weights, the 8-bit quantization will produce exactly the same accuracy because they require less bits. Some papers showed that ResNet works quite well with ternary weights : https://arxiv.org/pdf/1605.04711.pdf

Best,

Hicham

Yes, that does look like an interesting approach. I haven’t tried it myself, but the results look promising.

Thanks for your work. It really works with our model. However, our model runs slower on GPU after quantizations. Is there anything wrong with my deploy? (http://stackoverflow.com/questions/38775675/why-my-tensorflow-network-becomes-slower-after-applying-the-quantization-tools-o) Otherwise, would we expect improvement in the near future? Thanks!

When I run the quantized model, I see some warning like this (the model gets results but is 10 times slower than the unquantized one) :

W tensorflow/contrib/quantization/kernels/quantized_conv_ops.cc:201] Zero is not representable in the quantized range used by the input. This means QuantizedConv2d has to fall back to a slow implementation, since the border of zero values can’t be represented easily. You should try to construct graphs that avoid this situation.

what’s wrong with the graph?

Hi Pete, As I apply quantization to my graph.pb i am getting this log of errors

“learning/FaceDetector/tensorflow_utils.mm:152] Could not create TensorFlow Graph: Not found: Op type not registered ‘Dequantize’

F /Users/mohammadzulqurnain/Downloads/tensorflow-master/tensorflow/contrib/ios_examples/cleaned sign recognition deep learning/FaceDetector/ViewController.mm:124] Couldn’t load model: Not found: Op type not registered ‘Dequantize'”

Hello Pete, is expected to get much slower inference for quantized graph ?

in my case the quantized graph is 20x slower than the original one (5ms –> 100ms)

True, I observe the same. But in my case it’s only 5x slower 🙂

I believe it’s due to a larger number of operations: 126 vs 54 in my case.

It seems that we need arithmetical operations over quantized numbers, not a conversion between dynamic fixed point/fixed point.

Probably because the Quantize Dequantize operations on each element extremes

Hi Pete,

Except re-building label_image with new dependencies, one also need to specify correct flags for the tool. From what I can see in the code, they are: –graph, –input_width, –input_height, –input_mean, –input_std, –input_layer, –output_layer.

Pingback: Benchmarking Quantization on Android - PKT

Hi Pete,

Great work!

And I want to learn more about how you determine the minimum and maximum ranges of the output of the maths ops like convolution. Would you please tell me where can I find the related code? (For example, you say that you use the QuantizeDownAndShrinkRange operator to take a 32-bit accumulated tensor, analyze it to understand the actual ranges used, and rescale so that the 8-bit output tensor uses that range effectively. Where can I find this piece of code?.)

If you look for the RequantizationRange op, in tensorflow/core/kernels/requantization_range.cc, you should see where the actual min/max values of the 32-bit range are extracted, ready to be used to convert down to 8-bit. You can skip this step if you figure out the range by running a lot of samples through the network and observing what the overall mins/maxes are, and replace it with constants with those values.

Hi Pete,

Optimizing GEMM for 8-bits is a very interesting approach. However, does the approach support efficiently sparsity?

By sparsifying activations and the weights, there a lot of zeros that can be skipped to reduce computational time. Does gemmlowp support sgemm instead of gemm or something like a mask ?

If not, how much work would it require to transform the gemm to a masked gemm in gemmlowp?

Thank you in advance,

In general I’m a bit of a skeptic for *runtime* sparsity for optimization. From experience, you usually need to reach at least 80 to 90% sparsity before the extra complexity in the decoding makes it worth it, and that’s very hard to achieve. I’d love to be proved wrong though, so I’d be interested to see any experimental results with gemmlowp.

Thanks for your reply Pete.

On mobile one would like to avoid multiplications as they are relatively expensive compared to additions, so I was wondering if simply adding an if-not-zero-do-multiply strategy would save energy. There are several recent papers that propose efficient inference engines based on aggressive quantization and skipping operations on zero-values (here’s an example https://arxiv.org/pdf/1610.00324v1.pdf), I was wondering if something like that is/will be internally supported by gemmlowp.

(it’s not very hard to achieve ~80% sparsity on fully-connected layers with a slight accuracy drop, activations can also be very sparse by using ReLuT)

Hi, you absolutely contribute nice work! I really thanks about it.

I have one question. Is there any diff between integer quantization and decimal(fixed-point like 8bit-wide, 4bit for fraction bit) quantization? I’m try to make dynamic fixed point approximation on your work. But It seems only integer quantization.

It’ll be really helpful anyone reply here!

thanks

I think when the fix-point is mentioned, people think it has fractional values. For example, the bits at the left side of the decimal point stand for 0.5, 0.25,0.125. But integer numbers has not fractional part.

I tired to run quantize_graph on windows (without GPU), I am getting bellow error

“No OpKernel was registered to support Op ‘QuantizeV2’ with these attrs. Registered devices: [CPU]”.

Does this mean the quantization of a graph is only supported on a device with GPU?

Thanks.

Hi, I have the same issue. Did you solved this problem? How did you do this?

Hi Pete, great blog. I had some issue with finding the quantize_graph tool, it seems that the path changed in the latest recent releases.

The new line is:

bazel build tensorflow/tools/quantization:quantize_graph

Cheers

I have gotten an issue where I cannot do the bazel build step. I searched through the files and found that what I am looking for is likely in the tensorflow/contrib/quantization folder, but there is no section for tools within that folder. my quantization folder only has pycache python and an init.py file. Any one else run into this and have an explanation.

The error it is throwing is no such package ‘tensorflow/contrib/quantization’: BUILD file not found in package path

I do not see any quantization options within the tools folder

Hi I have the same issue.

The issue is when adding the

//tensorflow/contrib/quantization:cc_ops

//tensorflow/contrib/quantization/kernels:quantized_ops

dependencies in the BUILD file of label_image, right?

Pingback: CNN Network quantization 阶段总结 | 神刀安全网

Hi Pete,

Thanks for your nice work, it’s very practical tool for compressing neural networks and gaining much acceleration. I am very interested in neural networks acceleration on FPGA. Can you give more details about “QuantizeDownAndShrinkRange operator” and the latest advances in quantize CNN models in TensorFlow? Thanks~

If you look at https://github.com/tensorflow/tensorflow/tree/master/tensorflow/contrib/quantize you can see the latest scripts, and we’re hoping to have more documentation about this soon.

Hi we want to quantinze cnn that trained in kaldi… how we can use this tensorflow? what input does it takes? for example we have binary file of nnet, or final.feature_transform?

Hi Pete. What a great contribution and nice work!

I have a question about trying to deploy this quantization scheme in mobile/portable devices.

Compared to Song Han’s “code books” approach, this quantization has the advantage of being able to quickly convert from floating-point to fixed-point. Even if I perform non-linear quantization like “code books”, would not it be more efficient to run it on the server and deploy only the converted fixed-point parameters to mobile/portable devices for high accuracy?

Or I am a student who is still studying, so I don’t know the big picture. I wonder what I’m missing.

Thank you. Best Regards.

Pingback: Machine Learning & Deep Learning Blogs to Follow – Qamar-ud-Din

Pingback: tensorflow二次开发 - 大专栏

Pingback: Get started with machine learning on Arduino | FutureLab3D

Pingback: Accelerating TensorFlow Inference with Intel Deep Learning Boost on 2nd Gen Intel Xeon Scalable Processors – Edge AI and Vision Alliance

Pingback: Quantization Screencast « Pete Warden's blog

Pingback: Neural Networks: Simulate the working of Dynamic Fixed Point representation of the weights on hardware - PhotoLens

This is a rather informative article on quantizing neural networks using TensorFlow. It’s interesting to see how this process can be particularly beneficial for applications on low-power or mobile devices.

The ability of neural networks to produce accurate results despite the noise introduced by low-precision calculations is quite intriguing. While training might require higher precision, it seems reasonable that inference doesn’t necessitate such high precision. Quantization appears to be an efficient solution to reduce the size of models and speed up computations with minimal impact on accuracy.

What is the impact on the model’s accuracy when we switch from 32-bit to 8-bit calculations? Surely, there must be some trade-off.

The article refers to the accumulation of biases due to rounding errors. How significant is this issue in practice, and are there specific strategies to counteract this?

Pingback: TinyML with ML Inference on Arduino – Not Rocket Science!