I wanted a setup for web development that matched my production server, but let me do local development on my MacBook Pro. I’m a big fan of Parallels running Windows, so I set out to get Red Hat Fedora server running too. My requirements were that I should easily be able to install PHP extensions that aren’t standard in the OS X build, like IMAP and GD, and that I could save files to my local drive and immediately run them through the server without having to copy anything.

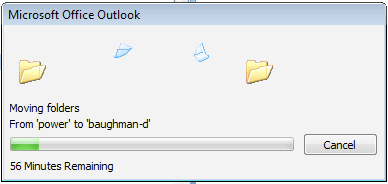

Getting started with Fedora was painless thanks to this ready-made Parallels disk image. I downloaded that and it loaded immediately with no setup required. I then ran yum to update the system to the latest patches, and I was in business. I had to remove yum-updatesd before I could open any of the desktop software installer applications, but once that was done I could run the Add Software application. There I chose the parts I needed, like MySQL, PHP and other web development additions.

After that was going, I created a test.php file containing <?php phpinfo(); ?> and placed it in /var/www/html/. Loading up Firefox inside Fedora and pointing it to http://localhost/test.php gave me the expected information dump. Everything was going smoothly, so I should have known there was trouble in store.
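
If you’d rather create that test page from a terminal, here’s a minimal sketch. The function name write_phpinfo_page is just something I made up for illustration, and you’ll most likely need to be root to write into /var/www/html/.

```shell
#!/bin/sh
# Sketch only: write_phpinfo_page is a hypothetical helper name.
# Write a one-line phpinfo() page to the path given as $1.
write_phpinfo_page() {
    printf '%s\n' '<?php phpinfo(); ?>' > "$1"
}
```

Calling write_phpinfo_page /var/www/html/test.php should leave you with the same file described above.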

The only remaining part was adding a link back to my OS X filesystem within Linux, so that Apache could access my files without having to do any copying. Parallels offers a great bridge between the Windows and Mac file stores, but I couldn’t find anything that easy for Fedora. What I did run across was sshfs, which uses FUSE and SSH to create a virtual folder inside Linux that points back to a directory on another machine reached over the network.

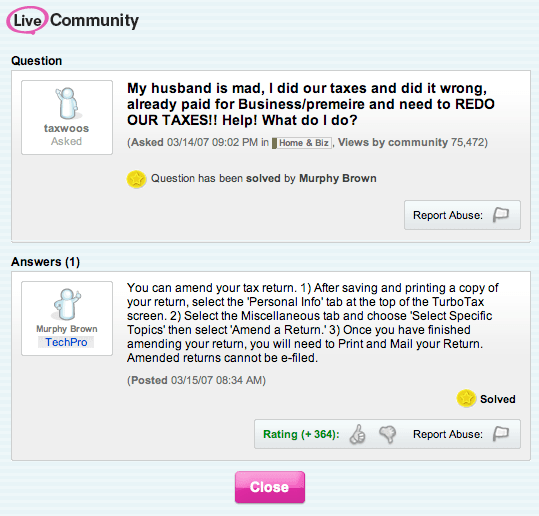

I went through all the steps to get that set up, but spent a very long time getting 403 Forbidden errors every time I tried to access OS X files through Apache. After a lot of hair-pulling, I figured out how to make everything play nicely together. It involves loosening the permissions model, so I don’t recommend doing this on a production server for security reasons, but it should be fine for local development. Here are the steps:

- On Linux, make sure you have sshfs installed, and that you’ve added your current Linux user to the fuse group with su -c 'gpasswd -a <username> fuse'

- Again on Fedora, as root, get fuse running with service fuse start and add it to the startup sequence with echo 'service fuse start' >> /etc/rc.local

- On OS X, go to System Preferences->Sharing and turn on Remote Login. Make a note of the IP address it displays in that window.

- To test the remote login, in a Linux terminal window do ssh <mac user name>@<mac IP address>, e.g. ssh petewarden@10.0.1.196 . If this doesn’t work, you’ll need to stop and check the IP address and your Parallels network setup.

- Now you can try to set up the filesystem connection through SSH. On your Fedora terminal, type sshfs -o allow_other,default_permissions <mac user name>@<mac IP address>:<Path to your mac folder> /var/www/html/testbed , e.g. sshfs -o allow_other,default_permissions petewarden@10.0.1.196:/Users/petewarden/Sites/testbed /var/www/html/testbed . The magic bit here is the extra options for allowing other users to access the folder. Without these, the apache user won’t be able to read the files and you’ll get the 403 errors. Be warned though: these options are necessary but not sufficient, and you’ll need to follow the remaining steps to clear up all the permission problems.

- Because the files show up with a user ID and group ID that are unknown to the Linux filesystem, Apache’s strict security settings will refuse to display them. To get around this, you need to make the security settings less restrictive. First you’ll need to disable Security Enhanced Linux, aka SELinux. To do this through the GUI, go to the Security preferences and click the SELinux tab. Then choose "Disabled" from the dropdown menu. You could also do this on a per-program basis, but I wanted to keep it simple.

- Next you need to remove suEXEC, another security feature of Apache. To do this, just move the binary itself (on my system it’s at /usr/sbin/suexec) to another location, e.g. mv /usr/sbin/suexec /usr/bin/suexec_disabled

- Finally restart Apache with service httpd restart and try navigating to one of your pages. With any luck you’ll now be able to save files from OS X and immediately see them in Firefox within Fedora.
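
To save retyping the sshfs line every time the virtual machine boots, the mount step can be wrapped in a small script. This is only a sketch: the variable and function names are my own invention, the values are the example ones from the steps above, and the mkdir is there because sshfs needs the mount point to exist already.

```shell
#!/bin/sh
# Sketch only: MAC_USER, MAC_IP, MAC_DIR and MOUNT_POINT are placeholders
# holding the example values from the steps above; change them for your setup.
MAC_USER="petewarden"
MAC_IP="10.0.1.196"
MAC_DIR="/Users/petewarden/Sites/testbed"
MOUNT_POINT="/var/www/html/testbed"

# Print the sshfs command without running it, handy for checking the values.
sshfs_command() {
    printf 'sshfs -o allow_other,default_permissions %s@%s:%s %s\n' \
        "$MAC_USER" "$MAC_IP" "$MAC_DIR" "$MOUNT_POINT"
}

# Mount the Mac folder, creating the mount point first if it is missing.
mount_mac_folder() {
    mkdir -p "$MOUNT_POINT" || return 1
    sshfs -o allow_other,default_permissions \
        "$MAC_USER@$MAC_IP:$MAC_DIR" "$MOUNT_POINT"
}

# Detach the folder again; fusermount -u unmounts a FUSE filesystem.
unmount_mac_folder() {
    fusermount -u "$MOUNT_POINT"
}
```

Nothing happens when the file is loaded, since it only defines functions. Run sshfs_command to see the exact line that would be used, then mount_mac_folder to actually connect and unmount_mac_folder when you’re done.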

One of my main reasons for this setup was to easily install extensions. After going through these steps I was able to just run yum install php-gd to get the GD graphics library, a project that had previously taken me hours of fiddling on OS X even with Fink.

Update: I’m now using the Parallels Linux instance directly from OS X. To do that I had to enable HTTP in the firewall settings on Fedora, and then ran ifconfig to work out the IP address that it had acquired. After that I can navigate to http://x.x.x.x/ in my OS X copy of Firefox and access my files, without having to ever switch to the Linux desktop.
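
A quick way to check that the Linux instance is reachable from the OS X side, before opening a browser, is to ask curl for the HTTP status code. This is a rough sketch: guest_url and guest_status are names I made up, and the IP address and page path in the usage note are placeholders rather than values from my setup.

```shell
#!/bin/sh
# Sketch only: guest_url and guest_status are hypothetical helper names.

# Build the URL for a page served by the Fedora guest.
# $1 = guest IP as reported by ifconfig, $2 = path under /var/www/html
guest_url() {
    # ${2#/} drops one leading slash so both "a/b" and "/a/b" work.
    printf 'http://%s/%s\n' "$1" "${2#/}"
}

# Print the HTTP status code the guest returns for that page.
guest_status() {
    curl -s -o /dev/null -w '%{http_code}\n' "$(guest_url "$1" "$2")"
}
```

For example, guest_status 192.168.0.10 testbed/index.php prints just the status code. A 200 suggests everything is wired up, while a 403 points back at the permission steps above.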