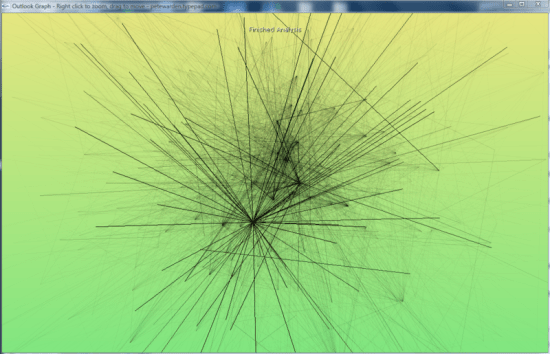

If your application needs a large graph, as I did with my Outlook mail viewer, the first thing you should do is check for an existing library that will work for you. Matt Hurst has a great checklist for how to evaluate packages against your needs. If you can find one off-the-shelf, it’ll save a lot of time.

If you need to write your own, the best way to start is absorbing this wikipedia article on force-based layout algorithms. It has pseudo-code describing the basic process you’ll need to run to arrange your graph. It boils down to doing a physics-based simulation of a bunch of particles connected by springs, that repel each other when they get close. If you’ve ever written a simple particle system, you should be able to handle the needed code.

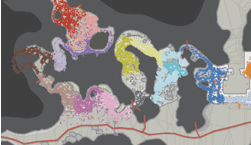

It’s pretty easy to get something that works well for small numbers of nodes, since the calculations needed aren’t very intensive. For larger graphs, the tricky part is handling the repulsion, since in theory every node can be repelled by every other node in the graph. This means the naive algorithm loops over every particle every time when calculating the repulsion for each in the graph, which gives O(N-squared) performance. The key to optimizing this is taking advantage of the fact that most nodes are only close enough to be repelled by a few other nodes, and creating a spatial data structure before each pass so you can quickly tell which nodes to look at in any particular region.

I ended up using a 2D array of buckets, each about the size of a particle’s repulsion fall-off distance. That meant I could just check the immediately neighboring buckets that a particle was in to find others that would affect it. The biggest problem was keeping the repulsion distance small enough that the number of particles to check was low.

In general, tuning the physics-based parameters to get a particular look is extremely hard. The basic parameters you can alter are the stiffness of the springs, the repulsion force, and the system’s friction. Unfortunately, it’s hard to know what visual effect changing one of them will have, they’re only indirectly linked to desirable properties like an even scattering of nodes. I would recommend implementing an interface that allows you to tweak them as the simulation is running, to try and find a good set for your particular data. I attempted to find some that worked well for my public release, but I do wish there was a different algorithm that was based on satisfying some visually-pleasing constraints as well as the physics-based ones. I did end up implementing a variant on the spring equation that repelled when the connection was too short, which seemed to help reduce the required repulsion distance, and is a lot cheaper to calculate.

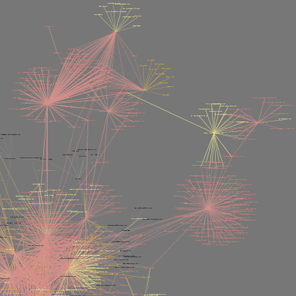

A fundamental issue I hit is that all of my nodes are heavily interconnected, which makes positioning nodes so that they are equally separated an insoluble problem. They often end up in very tight clumps in the center, since many of them want to be close to many others.

Another problem I hit was numerical explosions in velocities, because the time-step I was integrating over was too large. This is an old problem in physics simulations, with some very robust solutions, but I was able to get decent behavior with a combination of shorter fixed time steps, and a ‘light-speed’ maximum velocity. I also considered dynamically reducing the time-step when large velocities were present, but I didn’t want to slow the simulation.

I wrote my library in C++, but I’ve seen good ones running in Java, and I’d imagine any non-interpreted language could handle the computations. All of the display was done through OpenGL, and I actually used GLUT for the interface, since my needs were fairly basic. For profiling, Intel’s VTune was very helpful in identifying where my cycles were going. I’d also recommend planning on implementing threading in your simulation code from the start, since you’ll almost certainly want to allow user interaction at a higher frequency than the simulation can run with large sets of data.