One of Apple’s secret weapons is its fantastic bug-tracking process. There are whole systems and departments devoted to crash reports (yes, we do read all those comments, including the swearing in obscure languages) and to external and internal bug reports. What really made the whole thing work was the quality of the descriptions, thanks to the training we all received. That matters because a well-described bug will be given the right priority, go to the right engineer, be understood quickly, and be easy to test to be sure it really is fixed.

If you’re looking for an effective way of improving your own software, it’s hard to beat filing good bugs. Here’s what you need:

Title: Make it short but specific and descriptive. "Crash when closing save dialog" is better than "Save error".

Summary: This should be two or three sentences covering what the person who has to assign the bug needs to know. Usually there’s someone non-technical or semi-technical who works out which engineer should look at it. A good summary gives them the information they need to route it, first time, to the person who can fix it.

Reproduction Steps: Probably the hardest part to get right is describing what someone has to do to see the problem on their machine. If possible you should try to recreate the problem yourself, noting the steps you take as you do it. If it doesn’t happen again, then that’s important information for the report too, and you should try to describe what you remember doing before the first occurrence.

If you do have luck getting it to happen again, note down in numbered, explicit steps exactly what it takes, e.g.:

1. Open up the application

2. Go to the File main menu, then choose Save

3. Click on the close icon

It’s tempting to put something like "Try to save, and then close the dialog" for a process that seems as simple as this, but I guarantee that the recipient will use Command+S instead of the menu command, or the keystroke for closing a window, or not use a fresh start of the application, or will have some other variation that happens to avoid the crash.

For really tricky problems, a screen capture of yourself reproducing the bug can be invaluable, in case there’s something subtle about your actions that triggers the issue. One of the hardest bugs I hit turned out to only occur when the sub-windows of the application were arranged in a certain pattern! Even a saved file that prebakes a lot of the steps can save a lot of time.

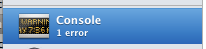

Results: In this case it’s pretty obvious, but a lot of bugs may take some domain knowledge to understand what the expected result is, and it’s also helpful to spell out exactly what you’re seeing. Screenshots can be your friend here; it’s often easier to show the bad results than describe them in words.

Regression: If you’ve tried other versions of the application or service, or run it on other operating systems, the results can be an important clue to the engineer about where in the code it’s going wrong.

Notes: Anything else you think is useful should be in here, such as links to similar bugs or your contact information. It’s good to keep this at the end: the engineer finally assigned to the problem still gets the in-depth information, while the people routing the bug through the system can get a clear overview from just the first few sections.
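If you file a lot of bugs, it can help to keep the sections above as a small template so none of them gets skipped. Here is a minimal sketch in Python. The `BugReport` class and its field names are my own invention for illustration, not the format of any real tracker, and the summary and results text are made up to match the save-dialog example.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class BugReport:
    """Hypothetical container mirroring the sections described above."""
    title: str
    summary: str
    reproduction_steps: List[str]
    results: str
    regression: str = ""  # optional: other versions or platforms tried
    notes: str = ""       # optional: links, contact details, debugging notes

    def render(self) -> str:
        """Return the report as plain text, overview sections first."""
        # Number the steps explicitly so nobody has to guess the order.
        steps = "\n".join(
            f"{number}. {step}"
            for number, step in enumerate(self.reproduction_steps, start=1)
        )
        sections = [
            ("Summary", self.summary),
            ("Reproduction steps", steps),
            ("Results", self.results),
            ("Regression", self.regression),
            ("Notes", self.notes),
        ]
        # Empty optional sections are left out rather than printed blank.
        body = "\n\n".join(
            f"{heading}:\n{text}" for heading, text in sections if text
        )
        return f"{self.title}\n\n{body}"


# Illustrative values only, based on the save-dialog example.
report = BugReport(
    title="Crash when closing save dialog",
    summary="The application crashes when the save dialog is closed "
            "with the close icon.",
    reproduction_steps=[
        "Open up the application",
        "Go to the File main menu, then choose Save",
        "Click on the close icon",
    ],
    results="The application crashes. Expected: the dialog closes and "
            "the application keeps running.",
)
print(report.render())
```

Making the title, summary, steps, and results required, and regression and notes optional, reflects which parts the people routing the bug rely on; adjust that split to suit whatever tracker you actually use.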

As a lazy programmer, I use other people’s code whenever possible. That means I spend a lot of time filing bugs myself, so if you want to see me eating my own dog food, here’s an example of one I filed against OpenCalais:

PHP demo rendering glitch in Firefox, Safari Javascript error

Summary:

Running the PHP demo in Firefox on OS X draws an extra frame over part of the results. The results page suffers a Javascript error and only displays an error message in Safari.

Reproduction steps:

- Download CalaisPHPDemo_08May29.zip

- Unzip onto a folder on your server

- Copy JSON.php from src/pear/ to src/public

- Navigate to src/public/CalaisPHPDemo.html in Firefox 2.0.15 or Safari Version 3.1.1 on OS X 10.5.3 (I have a version online at http://funhousepicture.com/calaisdemo_original/src/public/CalaisPHPDemo…. )

- Copy and paste the text from the example file test/text1.txt (asteroid news story) into the main text box

- Leave the format pulldown on Document Viewer Style

- Click on the Show Results button

Results:

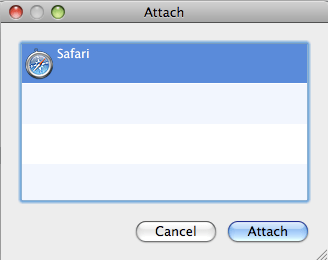

On Firefox the document text shows up, but there’s a pair of scroll bars partially obscuring the top portion. I’ve uploaded a screenshot as http://funhousepicture.com/calais_firefox_result.png

On Safari, the result page is just the logo and a message stating ‘Unsupported Document’. The screenshot is http://funhousepicture.com/calais_safari_result.png

I’d expect to see the results page rendered as it does in Internet Explorer.

Regression:

I was able to run the demo with no problems on Internet Explorer 7 on Windows Vista. I don’t see the scroll-bar issue on Firefox 2.0.14 on Vista either.

Notes:

By poking around with Firebug, I determined that the bogus scrollbars came from the CalaisJSONInfo element with its style set to ‘visibility: hidden;’, which still affects layout, whereas ‘display:none;’ makes it truly vanish. This may or may not be the correct fix depending on your intent, but it does remove the rendering glitch.

The Safari error was a bit more involved. The immediate cause was an exception in the initHighlight() Javascript, but it was unclear why that was happening. After some debugging with Drosera I found a couple of places where the code didn’t sit well with Safari’s JS host that caused errors, notably a use of insertAdjacentText() which is unsupported in webkit, and a null check that for some reason caused an error. After working around those I was able to see the result document successfully.