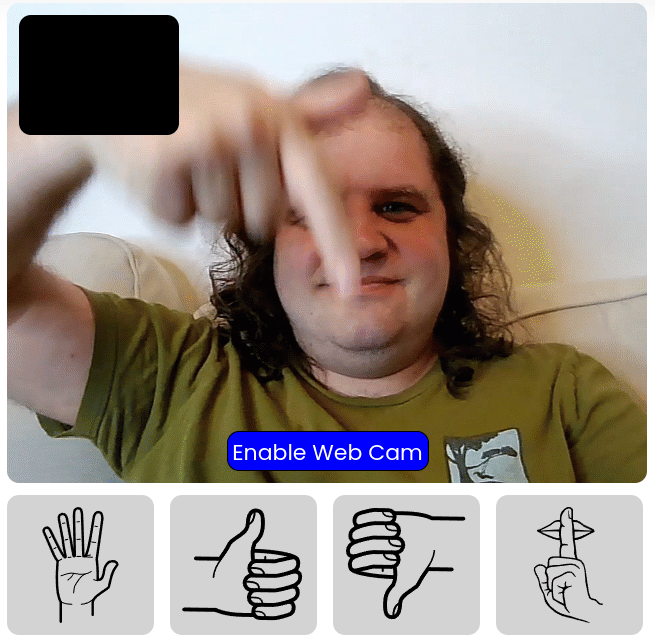

If you’ve heard me on any podcasts recently, you might remember I’ve been talking about a Gesture Sensor as the follow-up to our first Person Sensor module. One frustrating aspect of building hardware solutions is that it’s very tough to share prototypes with people, since you usually have to physically send them a device. To work around that problem, we’ve been experimenting with compiling the same C++ code we use on embedded systems to WASM, a web-friendly intermediate representation that runs in all modern browsers. By hooking up the webcam as the input, instead of the embedded camera module, and displaying the output dynamically on a web page, we can provide a decent approximation of how the final device will work. There are obviously some differences: the webcam is going to produce higher-quality images than an embedded camera module, and the latency will vary. Even so, it’s been a great tool for prototyping. I also hope it will help spark makers’ and manufacturers’ imaginations, so we’ve released it publicly at gesture.usefulsensors.com.
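To give a feel for the approach, here is a minimal sketch of how one C++ function can serve both the embedded and the web build. This is not our actual code: the function name `frame_mean_luma`, the RGBA pixel layout, and the brightness calculation are all hypothetical stand-ins for the real model, chosen only to show the shape of the interface. The one real piece is Emscripten’s `EMSCRIPTEN_KEEPALIVE` macro, which keeps a function exported in the WASM build.

```cpp
#include <cstddef>
#include <cstdint>

// Only pull in Emscripten's export macro when building for the web;
// on an embedded or desktop toolchain the macro expands to nothing.
#ifdef __EMSCRIPTEN__
#include <emscripten/emscripten.h>
#define EXPORT_FOR_WEB EMSCRIPTEN_KEEPALIVE
#else
#define EXPORT_FOR_WEB
#endif

// Hypothetical stand-in for the real gesture model: returns the average
// brightness (0-255) of an RGBA frame, or -1 if the arguments are invalid.
// The caller owns the pixel buffer, whether it came from a webcam or a
// camera module.
extern "C" EXPORT_FOR_WEB
int frame_mean_luma(const uint8_t* rgba, int width, int height) {
  if (rgba == nullptr || width <= 0 || height <= 0) {
    return -1;
  }
  const size_t pixel_count =
      static_cast<size_t>(width) * static_cast<size_t>(height);
  uint64_t total = 0;
  for (size_t i = 0; i < pixel_count; ++i) {
    const uint8_t* p = rgba + i * 4;  // 4 bytes per pixel: R, G, B, A
    // Integer approximation of luma: (77*R + 150*G + 29*B) / 256.
    total += (77u * p[0] + 150u * p[1] + 29u * p[2]) >> 8;
  }
  return static_cast<int>(total / pixel_count);
}
```

On the web side, the page would typically grab webcam frames with `getUserMedia`, draw them to a canvas, read the pixels back with `getImageData`, copy them into the WASM module’s memory, and call the exported function. On the device, the same function would be handed the camera module’s buffer directly.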

On that page you’ll find a quick tutorial, and then you’ll have the opportunity to practice the four gestures that are supported initially. This is not the final version of the models or the interface logic (for example, you’ll be able to see false positives that would be problematic in production), but it should give you an idea of what we’re building. My goal is to replace common uses of a TV remote control with simple, intuitive gestures like palm-forward for pause, or finger to the lips for mute. I’d love to hear from you if you know of manufacturers who would like to integrate something like this, and we hope to have a hardware version available soon so you can try it in your own projects. If you are at CES this year, come visit me at LVCC IoT Pavilion Booth #10729, where my colleagues and I will be showing off some of our devices together with Teksun.