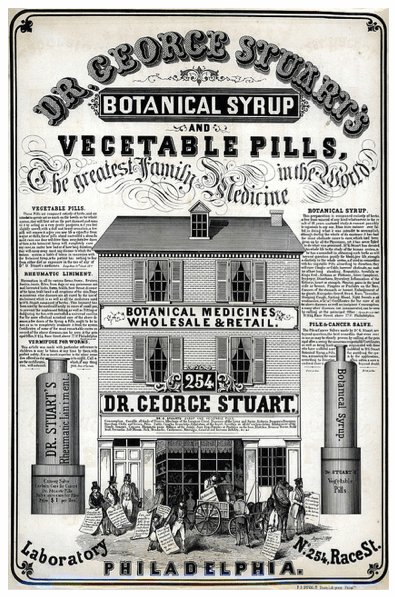

Photo by Library Company of Philadelphia

There's a whole new world of data emerging, along with cheap and easy tools for processing it. Unfortunately, a lot of snake-oil salesmen have spotted this too, and are now eagerly misusing 'big data' in their pitches. I was reminded of this when I read the recent Wall Street Journal article on health insurance companies looking at social network data. There's been detailed demographic and purchase data available for every household in the US for decades, so why haven't they used that existing data if the approach is as effective as the many hopeful consultants claim?

It's because data is powerful but fickle. A lot of theoretically promising approaches don't work because there are so many barriers between spotting a possible relationship and turning it into something useful and actionable. Russell Jurney's post on Agile Data should give you a flavor of how long and hard the path from raw data to product usually is. Here are some of the hurdles you'll have to jump:

Acquisition. Few data sets are freely available, and even if you can afford the price, the licensing terms are likely to be restrictive. Even if you have that sorted, you're at the mercy of the providers unless you're gathering it yourself. If they see you making money, in the best case they'll ramp up their price, and in the worst case they'll cut you off either for reputational reasons or so they can offer a similar service themselves. Can you imagine the outcry if insurance companies penalize donors to cancer charities, as the article postulates? Nobody will want to provide data with that sort of reputational risk looming.

Coverage. No matter how good your analysis results are, if you only have source data on 10% of the targets the product will be useless.

Over-determination. Age, income and industry probably do a pretty effective job of predicting your chances of becoming overweight. Is going to the trouble of spotting that somebody's buying exercise equipment really going to improve your prediction enough to justify the expense of testing, implementing and tuning the process?

Poor correlations. The data may just not carry the answers you need. This is more common than you'd think; many relationships that seem like they should be rock-solid don't pan out when you test them against reality.

Noise. A lot of information gets lost in the noise of real-world data sets. I think of this as the Megan Fox problem; so many Facebook users were fans of her that she appeared in almost every region's top 10 list and I had to run normalizing steps to remove her malign influence on my results. That of course degraded the overall fidelity of the conclusions.
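To make the normalizing idea concrete, here is a minimal sketch of one common way to do it: rank each region's pages by how over-represented they are locally compared with the whole data set (often called lift), rather than by raw fan counts. This is an illustration under assumptions, not the actual steps I ran; the function name `top_pages_by_lift`, the `min_fans` threshold, and the sample data are all made up for the example.

```python
from collections import Counter

def top_pages_by_lift(region_likes, k=2, min_fans=2):
    """Rank pages per region by lift: regional share divided by overall share.

    region_likes maps a region name to a list of page names, one entry per fan.
    A page that is equally popular everywhere gets a lift of about 1.0.
    """
    regional = {region: Counter(pages) for region, pages in region_likes.items()}
    overall = Counter()
    for counts in regional.values():
        overall.update(counts)
    total = sum(overall.values())

    ranked = {}
    for region, counts in regional.items():
        region_total = sum(counts.values())
        lift = {
            # (n / region_total) / (overall[page] / total), rearranged
            page: (n * total) / (region_total * overall[page])
            for page, n in counts.items()
            if n >= min_fans  # rare pages get wildly inflated lift, so drop them
        }
        ranked[region] = sorted(lift, key=lift.get, reverse=True)[:k]
    return ranked

# Hypothetical sample data: one page dominates the raw counts in every region.
likes = {
    "Texas": ["Megan Fox"] * 5 + ["Dallas Cowboys"] * 3 + ["Rodeo"] * 2,
    "Colorado": ["Megan Fox"] * 5 + ["Skiing"] * 3 + ["Hiking"] * 2,
}

raw_top = {region: Counter(pages).most_common(1)[0][0] for region, pages in likes.items()}
by_lift = top_pages_by_lift(likes)
print(raw_top)
print(by_lift)
```

The `min_fans` cutoff is where the loss of fidelity creeps in: dividing by overall popularity exaggerates pages with only a handful of fans, so you end up throwing away some genuine signal along with the noise.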

So what's the solution? As Russell says, you need a whole new approach to prototyping, focused on building something that works with actual data and lets you interactively explore what works in reality, versus the relationships you hope are there from thought experiments. At least the Aviva study in the article did try out their techniques on 60,000 records, though the report left me with lots of unanswered questions.

Next time somebody's trying to sell you on the awesomeness of their new data technique, ask to see a prototype. If they haven't got that far, it's snake oil.
