I’ve spent a lot of time at conferences talking about all the wonderful things that are now possible using machine learning on embedded devices, but as Stacey Higginbotham pointed out at this year’s TinyML Summit, despite all the potential there haven’t been many shipping applications. My experience is that companies like Google with big ML teams have been able to deploy products successfully, but it has been a lot harder for teams in other industries. For example, when I visited an appliance manufacturer in China and pitched them on the glorious future they could access thanks to TensorFlow Lite Micro, they told me they didn’t even know how to open a Python notebook. Instead, they asked if I could just give them a voice interface, or something that told them when somebody sat down in front of their TV.

I realized that the software framework model that had worked so well for TensorFlow Lite adoption on phone apps didn’t translate to other domains. Many of the firms that could most benefit from ML just don’t have the software engineering resources to integrate a library, even with great tools like Edge Impulse that make the process much easier. As I thought about how to make on-device ML more widely accessible, I realized that providing ML capabilities as small, cheap hardware modules might be a good solution. This was the seed of the idea that became the ML sensors proposal, now available as a paper on arXiv.

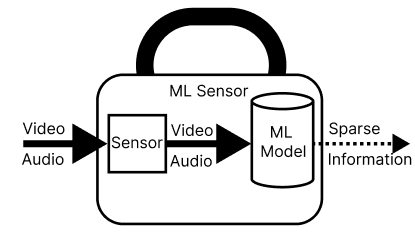

The basic idea is that system builders are already able to integrate components like sensors into their products, so why not expose some higher-level information about the environment in the same form factor? For example, a person sensor might have a pin that goes high when someone is present, and then an I2C interface to supply more detailed information about their pose, activities, and identity. That would allow a TV manufacturer to wake up the display when someone sat down on the couch, and maybe even customize the UI to show recently-watched shows based on which family members are present. All of the complexity of the ML implementation would be taken care of by the sensor manufacturer and hidden inside the hardware module, which would have a microcontroller and a camera under the hood. The OEM would just need to respond to the actionable signals from the component.
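To make the OEM's side of this concrete, here is a minimal sketch of what the host logic might look like. Everything specific in it is an assumption made for illustration: the payload layout, the `Person` type, the `decode_payload` and `handle_sensor` names, and the confidence threshold are all hypothetical, and the I2C read is passed in as a plain callable so the example runs without any hardware attached.

```python
import struct
from dataclasses import dataclass
from typing import Callable, List, Tuple

# Hypothetical payload layout (an assumption, not a real sensor's format):
#   byte 0          : number of people detected
#   then per person : 1 byte person ID (0 = unrecognized), 1 byte confidence (0-100)


@dataclass
class Person:
    person_id: int
    confidence: int


def decode_payload(payload: bytes) -> List[Person]:
    """Decode the hypothetical detail payload read over I2C."""
    if not payload:
        return []
    count = payload[0]
    people = []
    for i in range(count):
        offset = 1 + 2 * i
        if offset + 2 > len(payload):
            break  # payload was truncated, keep what we have
        person_id, confidence = struct.unpack_from("BB", payload, offset)
        people.append(Person(person_id, confidence))
    return people


def handle_sensor(
    presence_pin_high: bool,
    read_i2c: Callable[[], bytes],
    min_confidence: int = 60,
) -> Tuple[bool, List[int]]:
    """Return (wake_display, recognized_ids) for one poll of the sensor.

    The presence pin alone decides whether to wake the display; the I2C
    read is only performed when someone is actually there.
    """
    if not presence_pin_high:
        return False, []
    people = decode_payload(read_i2c())
    recognized = sorted(
        {
            p.person_id
            for p in people
            if p.person_id != 0 and p.confidence >= min_confidence
        }
    )
    return True, recognized


# Simulated poll: two people present, one recognized as ID 3.
wake, ids = handle_sensor(True, lambda: bytes([2, 3, 90, 0, 80]))
print(wake, ids)
```

The point of the sketch is how little the host has to know: it reacts to a pin and a few bytes, and never sees an image or a model.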

At the same time as I was thinking about how to get ML into more people’s hands, I was also worried about the potential for abuse that the proliferation of cameras and microphones in everyday devices enables. I realized that the modular approach might have some advantages there too. I think of personal information as toxic waste, because any leaks can be highly damaging to individuals and to the companies involved, and there are few data sources that have as much potential for harm as video and audio streams from within people’s homes. I believe it’s our responsibility as developers to engineer systems that are as leak-resistant as possible, especially if we’re dealing with cameras and microphones. I’d already explored the idea of using Arm’s TrustZone to keep sensitive data contained, but by moving the ML processing off the central microcontroller and onto a peripheral, we have the chance to design something that has a very small attack surface (because there’s no memory shared with the rest of the system) and can be audited by a third party to ensure any claims of safety are credible.

The ML sensor paper brings all these ideas together into a proposal for designing systems that are easier to build, and safer by default. I’m hoping this will start a discussion about how to improve usability and privacy in everyday systems, and lead to more practical prototyping and experimentation to answer a lot of the questions it raises. I’d love to get feedback on this proposal, especially from product designers who might want to try integrating these into their systems. I’m looking forward to seeing more work in this area, and I know I’m going to be busy trying to get some examples up and running, so watch this space!

Regarding microphones, I’ve been thinking about a device to monitor the environmental sound pressure level around businesses, for the sake of comparing it to legal limits and issuing alerts (or charges…). I guess this could be one application for such a device. It’d be a cheaper, connected solution.
