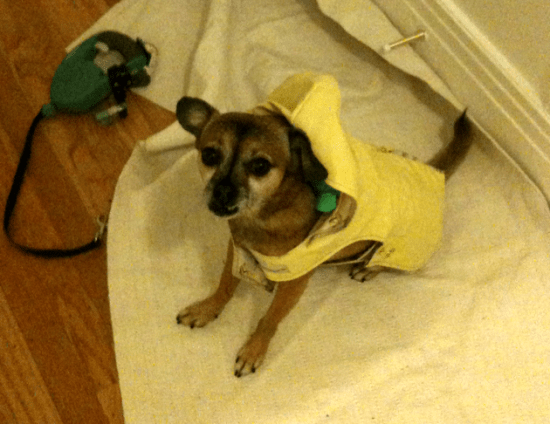

My dog Thor hates getting wet, but even when there's rain lashing against the windows he still starts off dancing in circles when it's time for his walk. It's only when I pull out his yellow rain jacket that he slumps and stares at me mournfully. He seems convinced that if I just left the jacket off, the rain would go away.

Much as I try to convince him of the error in his logic, he's unmoved, and it's hard to blame him. Humans will happily swallow studies that use the weasel word 'link' to claim that something associated with an outcome is its cause. Does obesity spread through your friendships? No, you just share the same risk factors as your friends.

As the Big Data revolution gives us more and more data to play with, we'll find many more suggestive correlations like these appearing. Our whole mental architecture is built for seeing meaningful patterns, even if we're staring at random phenomena like clouds in the sky. How much these mirages matter depends on how we want to apply them:

Prediction

Sometimes you don't care whether something causes an outcome; you just want some early warning of what that outcome will be. Thor knows it will be wet when he sees the raincoat. The main danger is that the two variables aren't actually dependent in any way and just happen to have been moving in an apparently synchronized way recently. The more variables you have to compare, the more likely this sort of false correlation is, so expect a lot of them with Big Data.
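To make that last point concrete, here is a minimal sketch in Python. It assumes nothing beyond the standard library, and the function names, sample size, and seed are my own illustrative choices rather than anything from a real data set. It generates one random "outcome" series, compares it against more and more unrelated random series, and reports the strongest correlation that turns up purely by chance.

```python
import math
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def strongest_chance_correlation(n_variables, n_samples=20, seed=0):
    """Largest |r| between a random target and n_variables unrelated series.

    Every series is independent Gaussian noise, so any correlation found
    here is a coincidence. n_samples and seed are illustrative defaults.
    """
    rng = random.Random(seed)
    target = [rng.gauss(0, 1) for _ in range(n_samples)]
    best = 0.0
    for _ in range(n_variables):
        candidate = [rng.gauss(0, 1) for _ in range(n_samples)]
        best = max(best, abs(pearson(target, candidate)))
    return best


if __name__ == "__main__":
    for n in (1, 10, 100, 1000):
        print(n, "variables -> strongest chance |r| =",
              round(strongest_chance_correlation(n), 2))
```

Because the seed is fixed, each larger run includes the same series as the smaller ones plus some extras, so the strongest coincidence can only stay the same or grow as you add variables. With short series and enough candidates, you should expect to see correlations that look impressive despite there being no relationship at all.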

If you're going to rely on a correlation to predict outcomes, you need at least a plausible story for the mechanism behind the correlation, and ideally multiple independent data sets that back it up.

Reaction

If I notice I'm struggling to get Thor out of the front door, then maybe I'll hide the rain jacket until we're in the porch. By resisting, Thor has changed my behavior, so he can no longer use the raincoat as a reliable signal of rain. The only reason to make a prediction is to take some action, and those actions may destroy the correlation. This is a painfully common problem in economics, and is usually expressed as Goodhart's Law: "once a social or economic indicator or other surrogate measure is made a target for the purpose of conducting social or economic policy, then it will lose the information content that would qualify it to play such a role".

This means that even if you've found a correlation with predictive power, you have to constantly measure its effectiveness, since the very act of relying on it as a guide may degrade its usefulness.

Control

I half-expect to get up one morning and discover that Thor's eaten the raincoat, in the hope of bringing back the sun. Once we notice a correlation, it's easy to convince ourselves and others that it's actually causing the outcome. Humans love stories, and stories have their own rules. If X happens, followed by Y, narrative logic requires that X caused Y. That makes it simple to persuade other people that they're seeing cause and effect rather than correlation, without really having to prove it.

The only reliable way I know to figure out whether you really can affect outcomes is by experiment, so before you put time or money behind an attempt, require a prototype. If the guy or girl trying to persuade you to take action can't show a small-scale proof-of-concept, then they might as well be trying to sell you a bridge in Brooklyn. Even if they show compelling results, the Hawthorne Effect may be kicking in, but at least you've got some weak proof.

If you want to be effective in a world awash with data, it pays to be skeptical of correlations, since you'll be seeing a lot more of them over the next few years.
