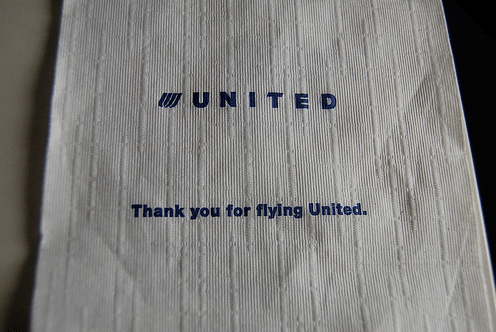

Default OpenGL bilinear sampling on high-contrast edges

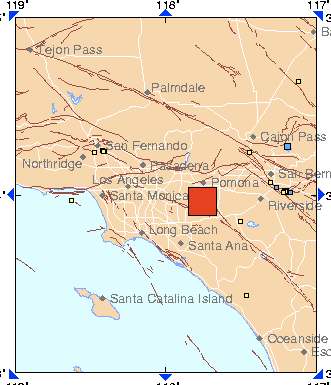

Using a shader to manually do the sampling in a linear color space

I recently ran into someone who was frustrated by the amount of stair-stepping that appeared along the edges of the fonts she was rendering in OpenGL. This reminded me of some of the hard-won lessons our team at Apple learned while trying to use GPUs for professional content creation, so I had to throw together a demonstration of one of the little-known tricks for improving your resampling quality. This one works especially well on high-frequency textures, like font letters with sharp edges.

All your image data is stored as color values between 0 and 255. The hard part to wrap your head around is that these don’t correspond well to the actual number of photons that your monitor will output for that color. Because the human eye evolved to see panthers lurking in the undergrowth, we’re a lot more sensitive to small changes in dark colors. Because we need to pack as much detail as possible into those 256 slots, most of those values are allocated to the dark end of the scale. This is done using a gamma curve, where the physical measurement of the light’s brightness (produced by a camera CCD array, for example) is put through a function that maps it onto the output values written into the image file.

It took me a long time to wrap my head around gamma. It’s a very slippery concept, and if you really want to dig into it I recommend Charles Poynton’s Gamma FAQ to learn more. The main thing you need to understand for what follows to make sense is that a grey value of 128 isn’t half as bright as 255, it’s only about a quarter as bright. Why does this matter? Because the bilinear filtering hardware that’s used for texture mapping just averages the stored values together. This means that a pixel sitting on the edge between a completely white (255) pixel and a completely black (0) pixel ends up with a value of 128. This makes it appear a lot darker than it should, and this bias greatly increases the effect of stair-stepping.
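To put rough numbers on that, here is a small Python sketch. It is not part of the downloadable example, and it assumes a plain power-law display with a gamma of 2.2, which is a common approximation rather than a measurement of any particular monitor. The names `to_linear` and `to_code` are made up for this illustration.

```python
GAMMA = 2.2  # assumed display gamma; real monitors and sRGB differ slightly

def to_linear(code, gamma=GAMMA):
    """Convert an 8-bit gamma-encoded value to linear light in 0.0..1.0."""
    return (code / 255.0) ** gamma

def to_code(linear, gamma=GAMMA):
    """Convert linear light in 0.0..1.0 back to an 8-bit gamma-encoded value."""
    return round(255.0 * linear ** (1.0 / gamma))

# How much light does mid-grey 128 emit, relative to white?
grey_light = to_linear(128)

# Averaging the stored values, as the filtering hardware does (rounded half up).
naive_code = (0 + 255 + 1) // 2

# Averaging the light itself, then encoding the result for display.
correct_code = to_code((to_linear(0) + to_linear(255)) / 2.0)

print(f"128 emits {grey_light:.2f} of the light that 255 does")
print(f"naive blend: {naive_code}, linear-light blend: {correct_code}")
```

Under that assumption, the value the hardware produces for a half-covered edge pixel is noticeably lower than the value that would actually emit half the light, which is the darkening described above.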

Luckily, with modern hardware it’s possible to bypass the default bilinear sampling and write your own in a pixel shader. This means you can convert the input color values into something closer to the physical measurements, do the averaging calculations, and then convert the result back to gamma space for output. To be totally correct you’d need to use an API like ColorSync to handle that conversion, using the details of your particular monitor, but that’s too heavyweight for a simple shader. As it happens, simply squaring the values (normalized to 0.0 to 1.0 rather than 0 to 255) gets you very close to the original linear space in most cases, and a simple square root takes you back to gamma space afterwards, so I wrote my example using that approximation.
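The arithmetic is easier to see outside a shader, so here is a minimal Python model of one manual bilinear sample. It is a sketch of the idea, not the shader from the download: the function names, the 2x2 texel layout, and the `linear_light` switch are all assumptions made for illustration, and it uses the gamma 2.0 approximation (square to decode, square root to encode).

```python
import math

def decode(v):
    """Gamma-encoded 0.0..1.0 value to approximate linear light (gamma 2.0)."""
    return v * v

def encode(v):
    """Approximate linear light back to a gamma-encoded 0.0..1.0 value."""
    return math.sqrt(v)

def lerp(a, b, t):
    """Linear interpolation between a and b by t in 0.0..1.0."""
    return a + (b - a) * t

def bilinear(texels, fx, fy, linear_light=True):
    """Blend a 2x2 block of texels laid out as ((top-left, top-right),
    (bottom-left, bottom-right)); fx and fy are the fractional sample
    position inside the block."""
    (tl, tr), (bl, br) = texels
    if linear_light:
        # Decode each texel before any averaging takes place.
        tl, tr, bl, br = (decode(t) for t in (tl, tr, bl, br))
    top = lerp(tl, tr, fx)
    bottom = lerp(bl, br, fx)
    result = lerp(top, bottom, fy)
    # Re-encode only after the blend is finished.
    return encode(result) if linear_light else result

# A vertical edge: black texels on the left, white on the right.
edge = ((0.0, 1.0), (0.0, 1.0))
hardware_style = bilinear(edge, 0.5, 0.5, linear_light=False)
corrected = bilinear(edge, 0.5, 0.5)
print(hardware_style, corrected)
```

In a real pixel shader the same steps would apply to four unfiltered texel fetches per channel, with the fractional position derived from the texture coordinate. The important design point is the ordering: decode each texel first, blend, then encode once at the end.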

Here’s the source code, with build scripts for OS X and Linux: Download linearexample.zip

You’ll need GLUT, which should be there by default on the Mac, but you may have to install freeglut on Linux. Once built, it displays a texture containing 1-pixel-wide white lines surrounded by black, slowly rotating to show off the stair-stepping. Every second it switches back and forth between the default bilinear filtering and my custom shader working in linear space, so you can see the difference it makes. If you’re using this for full 3D work, you’ll need to insert a perspective divide by w for the texture coordinates before you do the texture samples. It also doesn’t handle mip-maps, and another way to improve the quality would be to use a different sampling function rather than just linearly averaging.

Many thanks to both Greg Abbas and Garrett Johnson for teaching me everything I know about color and resampling theory. What I do understand is purely thanks to their patient explanations.