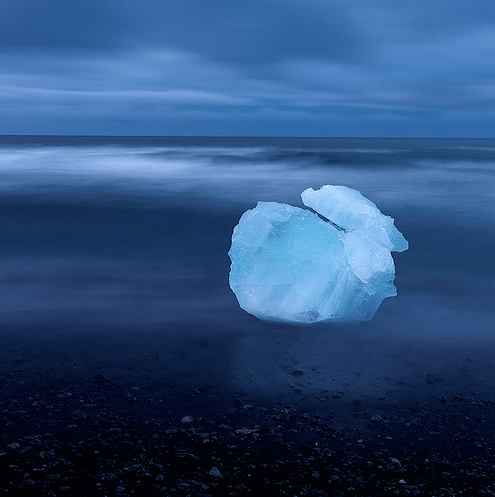

Photo by WallyG

There's a lot of interesting data out on the web that's locked up in web pages, with no API access to make it machine-readable. I'm particularly interested in phone records; just like emails, IMs and tweets, they form a detailed shadow of your social network. To automatically grab my phone call history from the AT&T site I turned to Selenium, which was originally built as a testing tool but is also well-suited to screen-scraping sites with complex login procedures.

To get started you can install the Selenium IDE in Firefox and record the steps you'd manually take to log in and get to the screen you're interested in. Selenium turns those actions into a script you can edit by hand and replay. In my case I needed to add some 'type' commands to enter the phone number and password, since those weren't captured. Here's the resulting script; once you've added your own details, you should be able to run it on your own account to download your call details as a CSV file:

What's really handy is that you can then use Selenium Remote Control to re-run that same script from your server, using PHP or other popular languages. It's a bit of a hack: it still needs windowing capabilities so that Firefox can run, plus a proxy server process to inject the needed code into external pages. Once it's running, though, it's an incredibly flexible way to deal with constantly changing websites.
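To give a rough idea of what replaying a recorded script from server code looks like, here is a minimal sketch in Python rather than PHP. It relies on the fact that the Remote Control client libraries expose methods named after the IDE commands (`open`, `type`, `click`, `wait_for_page_to_load`), so a recorded script can be treated as a list of commands and dispatched one by one. Every URL, locator and value below is a made-up placeholder, not taken from the AT&T site, and `RecordingClient` is a hypothetical stand-in that only logs calls so the sketch can be tried without a browser or a running Selenium server.

```python
# Hypothetical recorded steps: (command, arguments...).
# All paths, locators and values are placeholders for illustration only.
STEPS = [
    ("open", "/login"),
    ("type", "id=phone", "5551234567"),
    ("type", "id=password", "secret"),
    ("click", "id=submit"),
    ("wait_for_page_to_load", "30000"),
]


def replay(client, steps):
    """Call client.<command>(*args) for each recorded step, in order."""
    for command, *args in steps:
        method = getattr(client, command, None)
        if method is None:
            raise ValueError(f"client has no command {command!r}")
        method(*args)


class RecordingClient:
    """Stand-in for a real client: records calls instead of driving a browser."""

    COMMANDS = {"open", "type", "click", "wait_for_page_to_load"}

    def __init__(self):
        self.calls = []

    def __getattr__(self, name):
        # Only reached for attributes that normal lookup did not find.
        if name not in self.COMMANDS:
            raise AttributeError(name)

        def record(*args):
            self.calls.append((name,) + args)

        return record


if __name__ == "__main__":
    client = RecordingClient()
    replay(client, STEPS)
    for call in client.calls:
        print(call)
```

With a real Remote Control client object passed in place of `RecordingClient`, the same `replay` loop would drive the browser instead of filling a list. Keeping the steps as plain data also makes it easy to patch a single locator when the site's markup changes.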