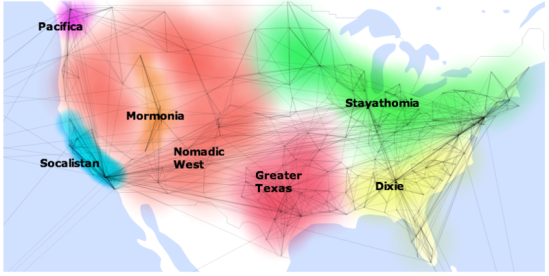

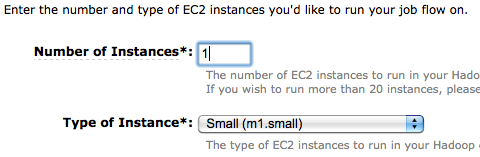

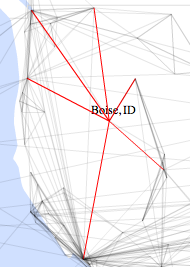

As I’ve been digging deeper into the data I’ve gathered on 210 million public Facebook profiles, I’ve been fascinated by some of the patterns that have emerged. My latest visualization shows the information by location, with connections drawn between places that share friends. For example, a lot of people in LA have friends in San Francisco, so there’s a line between them.

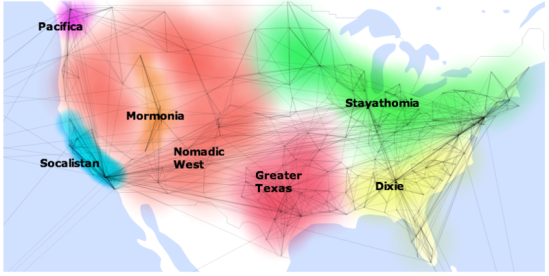

Looking at the network of US cities, it’s been remarkable to see how groups of them form clusters, with strong connections locally but few contacts outside the cluster. For example, Columbus, OH and Charleston, WV are nearby as the crow flies but share few connections, with Columbus clearly part of the North and Charleston tied to the South.

Some of these clusters are intuitive, like the Old South, but there are some surprises too, like Missouri, Louisiana and Arkansas having closer ties to Texas than to Georgia. To make sense of the patterns I’m seeing, I’ve marked and labeled the clusters, and added some notes about the properties they have in common.

Stayathomia

Stretching from New York to Minnesota, this belt’s defining feature is how near most people are to their friends, implying they don’t move far. In most cases outside the largest cities, the most common connections are with immediately neighboring cities, and even New York only has one really long-range link in its top 10. Apart from Los Angeles, all of its strong ties are comparatively local.

In contrast to further south, God tends to be low down the top 10 fan pages if she shows up at all, with a lot more sports and beer-related pages instead.

Dixie

Probably the least surprising of the groupings, the Old South is known for its strong and shared culture, and the pattern of ties I see backs that up. Like Stayathomia, Dixie towns tend to have links mostly to other nearby cities rather than spanning the country. Atlanta is definitely the hub of the network, showing up in the top 5 list of almost every town in the region. Southern Florida is an exception to the cluster, with a lot of connections to the East Coast, presumably sun-seeking refugees.

God is almost always in the top spot on the fan pages, and for some reason Ashley shows up as a popular name here, but almost nowhere else in the country.

Greater Texas

Orbiting around Dallas, the ties of the Gulf Coast towns and Oklahoma and Arkansas make them look more Texan than Southern. Unlike Stayathomia, there’s a definite central city to this cluster; otherwise, most towns just connect to their immediate neighbors.

God shows up, but always comes in below the Dallas Cowboys for Texas proper, and other local sports teams outside the state. I’ve noticed a few interesting name hotspots, like Alexandria, LA boasting Ahmed and Mohamed as #2 and #3 on their top 10 names, and Laredo with Juan, Jose, Carlos and Luis as its four most popular.

Mormonia

The only region that’s completely surrounded by another cluster, Mormonia mostly consists of Utah towns that are highly connected to each other, with an offshoot in Eastern Idaho. It’s worth separating from the rest of the West because of how interwoven the communities are, and how relatively unlikely they are to have friends outside the region.

It won’t be any surprise to see that LDS-related pages like Thomas S. Monson, Gordon B. Hinckley and The Book of Mormon are at the top of the charts. I didn’t expect to see Twilight showing up quite so much though, I have no idea what to make of that! Glenn Beck makes it into the top spot for Eastern Idaho.

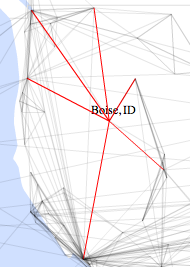

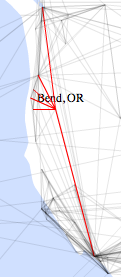

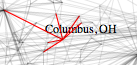

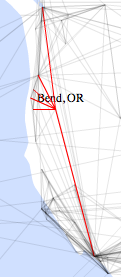

Nomadic West

The defining feature of this area is how likely even small towns are to be strongly connected to distant cities. It looks like the inhabitants have done a lot of moving around the country. For example, Boise, ID, Bend, OR and Phoenix, AZ all have much wider connections than you’d expect for towns their size.

Starbucks is almost always the top fan page, maybe to help people stay awake on all those long car trips they must be making?

Socalistan

Sorry Bay Area folks, but LA is definitely the center of gravity for this cluster. Almost everywhere in California and Nevada has links to both LA and SF, but LA is usually first. Part of that may be due to the way the cities are split up, but in tribute to the 8 years I spent there, I christened it Socalistan. Californians outside the super-cities tend to be most connected to other Californians, making almost as tight a cluster as Greater Texas.

Keeping up with the stereotypes, God hardly makes an appearance on the fan pages, but sports aren’t that popular either. Michael Jackson is a particular favorite, and San Francisco puts Barack Obama in the top spot.

Pacifica

The most boring of the clusters, the area around Seattle is disappointingly average. Its towns are tightly connected to each other, and it doesn’t look like Washingtonians are big travelers compared to the rest of the West, even though a lot of them claim to need a vacation!

So that’s my tour through the patterns that leapt out at me from the Facebook data. This is all qualitative, not quantitative, so I’m looking forward to gathering some numbers to back them up. For instance, I’d love to work out the average distance of friends for each city, and then use that as a measure of insularity. If you’re a researcher interested in this data set too, do get in touch, I’ll be happy to share.
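To give a flavor of what that insularity measure might look like, here's a rough Python sketch. It isn't anything I've actually run on the Facebook data. It assumes the connections are available as (city, city, friend count) triples plus a latitude/longitude per city, and it uses the great-circle (haversine) distance weighted by the number of friendships. The function names, the data layout and the friend counts in the example are all made up for illustration; only the city coordinates are approximately real.

```python
import math

EARTH_RADIUS_KM = 6371.0


def haversine_km(a, b):
    """Great-circle distance in km between two (lat, lon) points given in degrees."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    dlat = lat2 - lat1
    dlon = lon2 - lon1
    h = (math.sin(dlat / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin(dlon / 2) ** 2)
    # Clamp to guard against floating-point drift just above 1.0.
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(min(1.0, h)))


def average_friend_distance(coords, links):
    """Return {city: mean distance in km to friends}, weighted by friend count.

    coords: {city: (lat, lon)}
    links:  iterable of (city_a, city_b, friend_count) -- a hypothetical layout.
    """
    total_km = {}
    total_friends = {}
    for city_a, city_b, count in links:
        distance = haversine_km(coords[city_a], coords[city_b])
        # Each link counts toward both of its endpoints.
        for city in (city_a, city_b):
            total_km[city] = total_km.get(city, 0.0) + distance * count
            total_friends[city] = total_friends.get(city, 0) + count
    return {city: total_km[city] / total_friends[city]
            for city in total_km if total_friends[city] > 0}


# Approximate city coordinates; the friend counts are placeholders, not real data.
coords = {
    "Los Angeles": (34.05, -118.24),
    "San Francisco": (37.77, -122.42),
    "New York": (40.71, -74.01),
}
links = [
    ("Los Angeles", "San Francisco", 3),
    ("Los Angeles", "New York", 1),
]
result = average_friend_distance(coords, links)
```

A lower average would suggest a more insular city. One caveat with this sketch: it only sees friendships between different cities, so a real version would also want to count friends within the same city (at distance zero), or the most stay-at-home places would look less insular than they are.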

Update – I wasn’t able to make the data-set available after all, but if you liked this map, you can now build your own with my new OpenHeatMap project!