Despite the extra talk on OpenSocial there was still some time for open space discussions. The three topics were "OpenSocial vs ClosedPrivate" led by Kevin Marks, "What is Defrag?" with Jerry Michalski and "What are the next generation disruptive technologies?", proposed by Jeff Clavier. I chose to go to the last one, since I was interested to hear what people were expecting to see. People were throwing out interesting ideas and challenges incredibly fast, and I’ve tried to capture them here.

Matthew Hurst of Microsoft kicked off the discussion with his concern that a lack of trust that a service will still be there next week was hindering the adoption of new technologies. He doesn’t want us to focus on changing people’s behavior; he thinks the future will be in simpler clients.

David Cohen would just like his identity to be portable across all the systems. Matt was concerned that this would make information leakage a much bigger problem.

Jeff’s big question was what the friend relationship means once you start doing that. The relationship is all about context, and your MySpace friends won’t be the same as your LinkedIn contacts. There’s also no concept of strong ties versus weak ties.

Seth Levine wanted to know if anyone liked Facebook’s self-reporting mechanism, and gave the example of an acquaintance who marked his friend request with "We hooked up", not realizing the real meaning of the term!

I suggested email analysis as an approach to a better understanding of relationships. Kevin from the Land Grant Universities research access initiative was skeptical that his Gmail inbox reflected his strong ties. I countered that I thought that was true for our general life, but that most people’s work inboxes did correspond fairly well with those ties, since email is still the primary communication tool in many companies.

Ben from Trampoline Systems injected a note of skepticism into the discussion, noting that no weighting information could be added without support from the platforms that own the social network, like Facebook and LinkedIn, so it’s futile to discuss it before that’s a reality. A lady (whose name I didn’t catch) pointed out that one of the major things missing from networks is the recognition that relationships change over time.

Clarence Wooten, CEO of CollectiveX, was concerned that friend overload was becoming as bad as RSS. With so much undifferentiated data coming in, with the same mechanism for both childhood friends and chance acquaintances, it was becoming less useful. Some of the other folks proposed technical solutions for the RSS overload problem, including a couple sold by their own companies.

David Kottenkop(sp?) of Oracle laid down the challenge that computers should be able to figure out the ranking and classification of relationships based on communication patterns. As an editorial comment, this is something I’m convinced is the future, and is the idea behind a lot of what I’ve been working on.
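To make that idea a little more concrete, here is a minimal sketch of ranking contacts from communication patterns. It is only an illustration under my own assumptions, not anything that was presented in the session: the `rank_contacts` function, the `(sender, recipient, sent_at)` message format, the 90-day half-life and the 0.1 weight for one-way traffic are all hypothetical choices. The score favors recent, two-way exchanges over one-directional mail such as newsletters.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def rank_contacts(messages, me, now, half_life_days=90.0):
    """Rank the contacts of `me` by a hypothetical tie-strength score.

    messages: iterable of (sender, recipient, sent_at) tuples (assumed format).
    Older messages count for less: a message's weight halves every
    `half_life_days` days.
    """
    sent = defaultdict(float)      # decayed count of messages me -> contact
    received = defaultdict(float)  # decayed count of messages contact -> me

    for sender, recipient, sent_at in messages:
        age_days = (now - sent_at).total_seconds() / 86400.0
        weight = 0.5 ** (age_days / half_life_days)
        if sender == me:
            sent[recipient] += weight
        elif recipient == me:
            received[sender] += weight

    scores = {}
    for contact in set(sent) | set(received):
        # The balanced part of the traffic is treated as evidence of a real tie.
        reciprocal = min(sent[contact], received[contact])
        # Unanswered traffic in either direction counts for much less.
        one_way = abs(sent[contact] - received[contact])
        scores[contact] = reciprocal + 0.1 * one_way

    # Strongest ties first.
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)
```

A real system would also need to handle things like mailing lists, CCs and changes in a relationship over time, which this sketch ignores.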

Craig Huizenga of HiDat thought there were basically two options for organizing all this data: direct formal attributes or tagging. You either get something that’s tricky but has semantic meaning, or something more informal but easier to use. Across the table, someone suggested that what we really needed was another level of hierarchy for tags, so you could tag tags themselves.

Matt Hurst was concerned that a lot of the systems we were working on were susceptible to exploitation by users once they figured them out. For example, tagging is now being used to spoof search engines. The same thing is true for identities: people behave very differently on MySpace and LinkedIn in which friends they’ll accept and what information they’ll reveal. Just as Google has trained us all to use keywords in search, we’ve been trained into certain behaviors by these services. An interesting practical example of a world where universal identities are starting to appear is message boards. Since there are only a few different forum systems, it’s technically practical to hand-code interfaces to all of them, and allow users to avoid the hassle of repeated manual registrations with different sites.

Doruk pointed out that the social networks had to solve the problem of weighting friends, or die because they become useless. One term thrown around for this was relationship management.

Ben pointed out that we’re a really skewed sample to be talking about this stuff. MySpace is still massive, and we’re not the mainstream users. There’s a generation gap here, where we’ve got very different perceptions than the kids of today. Matt mentioned as an example that MySpace users appreciate seeing ads on a site, because they know that means that it’s a free service, and they won’t be ambushed with any charges.

He wanted to know where the monetization of future services would come from. He doesn’t want more ad-led services; he wants to know where the painful problems are that people will pay us money to solve.

This led me to ask whether the enterprise was the answer. Was that where we could still sell services? Matt answered with a maybe, since there’s no possibility of ads there, and there are mechanisms to force people to use services if someone in the hierarchy decides it’s necessary.

Seth suggested that we all needed to bring our high-falutin’ visions down to something real and concrete. He described his first experience of working in a large corporation, and being astonished to discover that everyone left at 5pm. 90% of people want to go home, not wrestle with new technology in the hope it will eventually make them more productive. He thought NewsGator was a great example of a company taking our fancy technology, and turning it into solutions for everyday problems.

Ben agreed that no one wants to adopt this stuff in a corporation. Matt suggested that the only way forward was to throw some of this technology into firms, and see how people creatively decide to use it.

Beth Jefferson of BiblioCommons jumped in, suggesting that these technologies brought up a lot of tricky issues. People who aren’t friends-of-friends with a decent number of people end up isolated if there’s heavy use of social networks; you’re just magnifying the effects of cliques. She gave a great quote: "Search is a representative democracy with unfair elections". The same goes for blog postings: we pretend to egalitarian principles, but know that there’s a core oligarchy of highly-influential bloggers.

Jeff brought the discussion back to first principles by asking what the world really needs. Craig suggested a way to deal with the overload of information. Jeff thought that turning off your computer for a week, and seeing what you missed, was a good start.

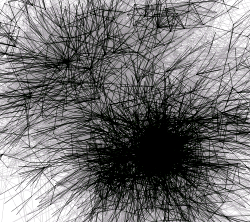

Matt’s concern was how flat data was, and the lack of tools to deal with it in a meaningful way. Seth suggested sorting out some data formats, so we can visualize all this information. Christian of the CAF advisory council suggested that finding experts from amongst our circle of friends was a great unsolved problem.

Greg Cohn of Yahoo gave probably the best comment of the session when he suggested that the biggest problem facing the world is the lack of clean water for billions of people, not information overload. This led to a really interesting discussion in the session and afterwards about how we change policy to solve these real, people-are-dying problems. I’m going to need a post on its own to do it justice, but it’s something that I have trouble forgetting: as a community we’re incredibly lucky, and are we really giving back enough to the rest of the world that’s trapped in poverty?